The "cloud" has always been a misnomer: a marketing abstraction for a sprawling network of concrete warehouses, humming servers, and massive cooling systems. Behind every AI-generated image and every archived email lies a data center that is increasingly difficult to sustain. These facilities are not only land-intensive but also voracious consumers of electricity and water, creating a growing tension between digital ambitions and the physical limits of the planet.

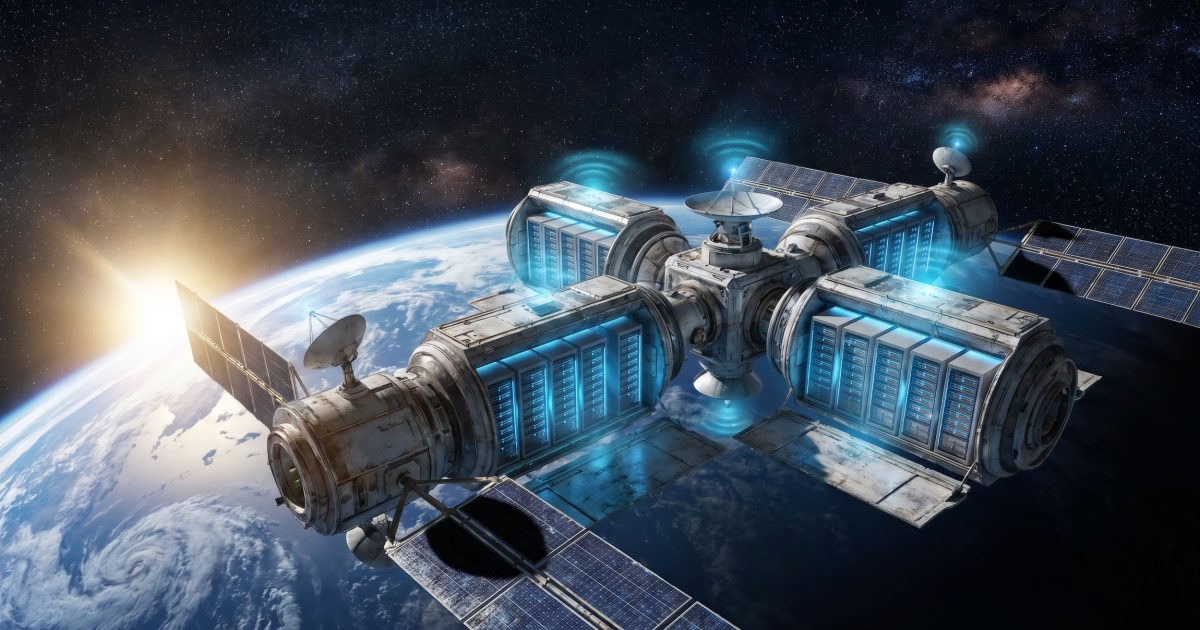

As the demand for compute power accelerates, driven largely by the rise of generative AI, the search for a release valve has led researchers and engineers to look upward. The proposal to move data centers into low Earth orbit is migrating from speculative thought experiment toward serious industrial consideration. In orbit, waste heat can be radiated directly to deep space without cooling towers or water, although the absence of air means radiation is the only way to shed it and the radiator panels must be large. Above the atmosphere and the weather, sunlight is stronger and, in suitable orbits, nearly continuous, offering abundant solar energy without competing for land or straining local water supplies.

The terrestrial bottleneck

The strain that data centers place on ground-level infrastructure is no longer a niche concern. Power grids in regions that host dense clusters of facilities — northern Virginia, the Netherlands, Ireland, parts of Scandinavia — have faced documented capacity constraints, with utilities in some cases delaying new grid connections. Cooling alone accounts for a substantial share of a data center's total energy draw, and the water consumed by evaporative cooling towers has become a point of friction with local communities and regulators.

The problem compounds as workloads grow heavier. Training and running large language models requires orders of magnitude more compute than the search queries and streaming video that defined the previous era of internet infrastructure. Each generation of frontier AI models demands larger clusters of GPUs running for longer periods, and the facilities housing them must scale accordingly. Land use, permitting timelines, and grid interconnection queues all introduce friction that slows the buildout of terrestrial capacity.

This is the context in which orbital data infrastructure has gained a hearing. The concept is not entirely new — satellite communications and on-orbit computing have existed in rudimentary forms for decades — but the economics of space access have shifted. The cost per kilogram to low Earth orbit has fallen dramatically over the past fifteen years, driven by reusable launch vehicles. That cost curve, if it continues, changes the calculus for placing heavy hardware above the atmosphere.

Engineering tradeoffs in orbit

The theoretical advantages are straightforward: heat rejection by radiation alone, with no water or cooling towers; sunlight unfiltered by atmosphere and, in suitable orbits, nearly uninterrupted; and zero competition for terrestrial land or freshwater. But the engineering tradeoffs are substantial. Because a vacuum rules out convection, all waste heat must leave through radiator panels whose area grows with the power dissipated. Latency is the most immediate concern for users. The signal path to a satellite a few hundred kilometers up adds only a few milliseconds, but traffic must also pass through ground stations and relay links with limited bandwidth and intermittent visibility, introducing delays that may be acceptable for archival storage or batch processing but problematic for real-time applications. Maintenance presents another challenge: hardware failures that a technician could resolve in minutes on the ground become far more complex when the server rack is orbiting at an altitude of several hundred kilometers.
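To put rough numbers on two of these tradeoffs, the following Python sketch estimates the radiator area needed to shed heat by radiation alone (using the Stefan-Boltzmann law) and the minimum signal delay to a satellite directly overhead. Every input is an illustrative assumption rather than a figure from the reporting: a 1 MW heat load, a 300 K radiator with emissivity 0.9 that radiates from both faces, and an altitude of 550 km. The function names are hypothetical, and the radiator estimate ignores sunlight and Earth's infrared glow absorbed by the panels, so it is optimistic.

```python
# Back-of-envelope sketch; all inputs below are assumed, not sourced.
STEFAN_BOLTZMANN = 5.670374419e-8   # W / (m^2 K^4)
SPEED_OF_LIGHT_KM_S = 299_792.458   # km / s


def radiator_area_m2(heat_watts, temp_kelvin, emissivity=0.9, sides=2):
    """Ideal panel area needed to radiate heat_watts to deep space.

    Ignores heat absorbed from the Sun and Earth, so real panels
    would need to be larger than this figure.
    """
    flux_per_face = emissivity * STEFAN_BOLTZMANN * temp_kelvin ** 4
    return heat_watts / (flux_per_face * sides)


def one_way_delay_ms(altitude_km):
    """Minimum propagation delay to a satellite directly overhead."""
    return altitude_km / SPEED_OF_LIGHT_KM_S * 1000.0


# Assumed scenario: 1 MW of waste heat, 300 K radiator, 550 km orbit.
area = radiator_area_m2(heat_watts=1_000_000, temp_kelvin=300)
delay = one_way_delay_ms(550)

print(f"Radiator area: {area:.0f} m^2 per MW")
print(f"One-way propagation delay: {delay:.2f} ms")
```

Under these assumptions the radiator comes out on the order of 1,200 square meters per megawatt, while the straight-line signal delay stays under 2 milliseconds. That suggests heat rejection hardware and ground-link capacity, more than raw distance, are where the engineering burden is likely to fall.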

There is also the question of orbital debris. Low Earth orbit is already congested with satellites and fragments from previous missions. Adding large-scale infrastructure increases collision risk and raises governance questions that no existing regulatory framework fully addresses. The Outer Space Treaty of 1967 and subsequent agreements were not drafted with commercial data center constellations in mind.

Still, the concept does not require an all-or-nothing commitment. A more plausible near-term path involves offloading specific workload categories — cold storage, asynchronous AI training, disaster-recovery backups — where latency tolerance is high and energy intensity is the binding constraint. Terrestrial facilities would continue to handle latency-sensitive tasks while the most power-hungry operations migrate upward.

The tension at the center of this idea is structural. Digital infrastructure is growing faster than the terrestrial systems that power it, and no single solution — renewable energy, nuclear microreactors, immersion cooling, orbital offloading — resolves the mismatch alone. Whether space-based data centers become a meaningful part of the answer depends on launch economics, regulatory frameworks that do not yet exist, and whether the engineering community can solve latency and maintenance problems at scale. The forces pulling in both directions — urgent demand for compute on one side, formidable logistical barriers on the other — are unlikely to resolve quickly. How they interact over the next decade will shape not just the data center industry but the physical geography of the internet itself.

With reporting from L'ADN.
