The Intellectual Friction of Changing Your Mind

In contemporary discourse, the act of changing one's mind is frequently characterized as "flip-flopping" — a perceived sign of weakness or intellectual instability. Politicians who revise a position face attack ads. Public figures who update a stance risk accusations of opportunism. Consistency, in this framing, functions as a proxy for integrity. Yet the equation between rigidity and reliability deserves scrutiny, particularly as the cognitive sciences continue to map the mechanisms behind belief persistence.

The resistance to revising one's views is not merely a cultural preference. It is rooted in the architecture of human cognition. When confronted with evidence that contradicts an established belief, the brain often generates a stress response comparable to what it produces under physical threat. The phenomenon is well documented in research on cognitive dissonance, the theory first articulated by psychologist Leon Festinger in the 1950s. The discomfort is real and measurable, and it strongly discourages further inquiry. Rather than sit with the tension, most people resolve it by discounting the new information, reinforcing the original position, or avoiding the source of contradiction altogether.

Why the Brain Defends Its Priors

The tendency to protect existing beliefs is not irrational in evolutionary terms. For most of human history, rapid consensus within a group carried survival advantages. Changing one's assessment of a threat or an ally mid-course could introduce dangerous hesitation. The cognitive shortcuts that kept early humans alive — confirmation bias, motivated reasoning, in-group conformity — persist in modern brains navigating a radically different information environment.

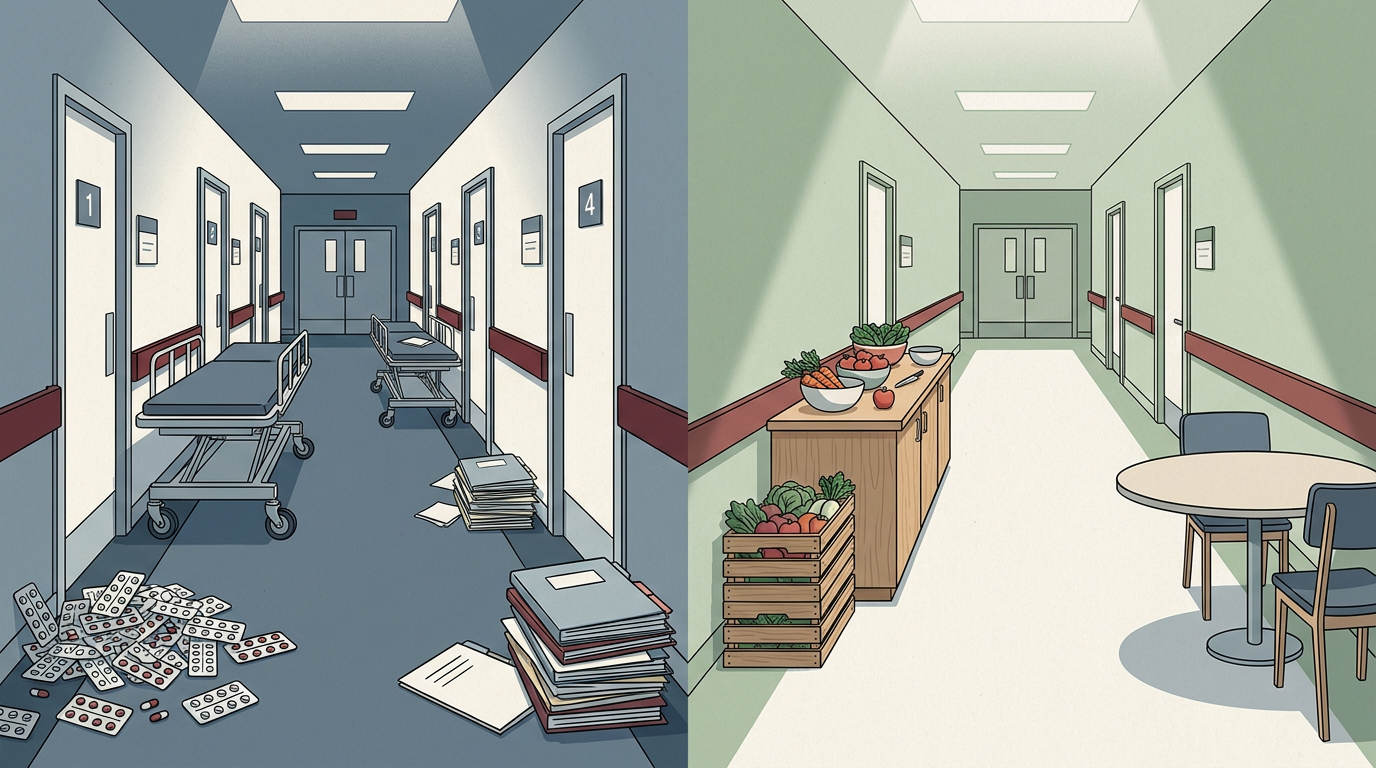

The problem is one of mismatch. Heuristics that evolved for small-group survival now operate in a landscape of effectively limitless data streams, competing narratives, and accelerating complexity. In such an environment, the cost of clinging to outdated mental models rises sharply. A belief formed under one set of conditions may become actively misleading as circumstances shift. Yet the psychological machinery that guards those beliefs remains calibrated for a simpler world.

This dynamic plays out visibly in public life. Political polarization, resistance to scientific consensus on issues such as climate change or public health, and the entrenchment of online communities around fixed narratives all reflect, in part, the brain's preference for coherence over accuracy. The discomfort of updating a worldview is not trivial: it can feel like a threat to identity, particularly when beliefs are tied to group membership or personal history.

Intellectual Humility as a Practiced Skill

A growing body of work in psychology frames the capacity to revise one's beliefs not as a personality trait but as a skill — one that can be developed through deliberate practice. The concept often invoked is "intellectual humility," defined broadly as the recognition that one's knowledge is limited and potentially fallible. Columnist David Robson has noted that this quality correlates with better decision-making and more resilient interpersonal relationships, suggesting that the benefits extend well beyond abstract epistemology.

The practical strategies for cultivating such flexibility are deceptively simple. Actively seeking the strongest version of an opposing argument — a practice sometimes called "steelmanning" — can reduce the reflexive dismissal that cognitive dissonance encourages. Separating one's identity from one's positions makes revision feel less like self-betrayal. Treating beliefs as working hypotheses rather than fixed commitments lowers the psychological stakes of being wrong.

None of this implies that all positions deserve equal weight, or that changing one's mind is inherently virtuous regardless of direction. The goal is not perpetual uncertainty but calibrated confidence — holding views with a degree of conviction proportional to the evidence supporting them, and adjusting when that evidence shifts.

The tension, then, is structural. On one side sits the brain's deep preference for consistency, reinforced by social norms that reward steadfastness. On the other sits an information environment that changes faster than any fixed worldview can accommodate. Whether institutions — educational, political, media — can be redesigned to reward epistemic flexibility rather than punish it remains an open question, and one with consequences that extend far beyond individual cognition.

With reporting from New Scientist.
