The conflict in Ukraine has, over successive phases, become the most consequential testing ground for military technology since the early years of precision-guided munitions. The latest chapter centers on autonomy. Confronted by persistent Russian electronic warfare — which can sever the radio links operators rely on to guide first-person-view (FPV) drones to their targets — Kyiv has mobilized a decentralized network of over 200 domestic technology companies to embed artificial intelligence directly into its drone fleet. The ambition is straightforward in concept and formidable in execution: build unmanned systems that can identify, track, and strike targets with minimal or zero human input once a communication link is lost.

FPV drones, small quadcopters steered in real time through a video feed, became a defining weapon of the war's middle period. They are cheap, fast to manufacture, and devastatingly effective against armored vehicles and infantry positions. But their dependence on continuous radio contact created a vulnerability that Russian forces learned to exploit through broadband jamming, alongside GPS spoofing that disrupts satellite navigation. The push toward onboard autonomy is, in essence, an engineering answer to an electronic-warfare problem: if the link goes down, the drone must be able to finish its mission alone.

A Decentralized Arms Industry

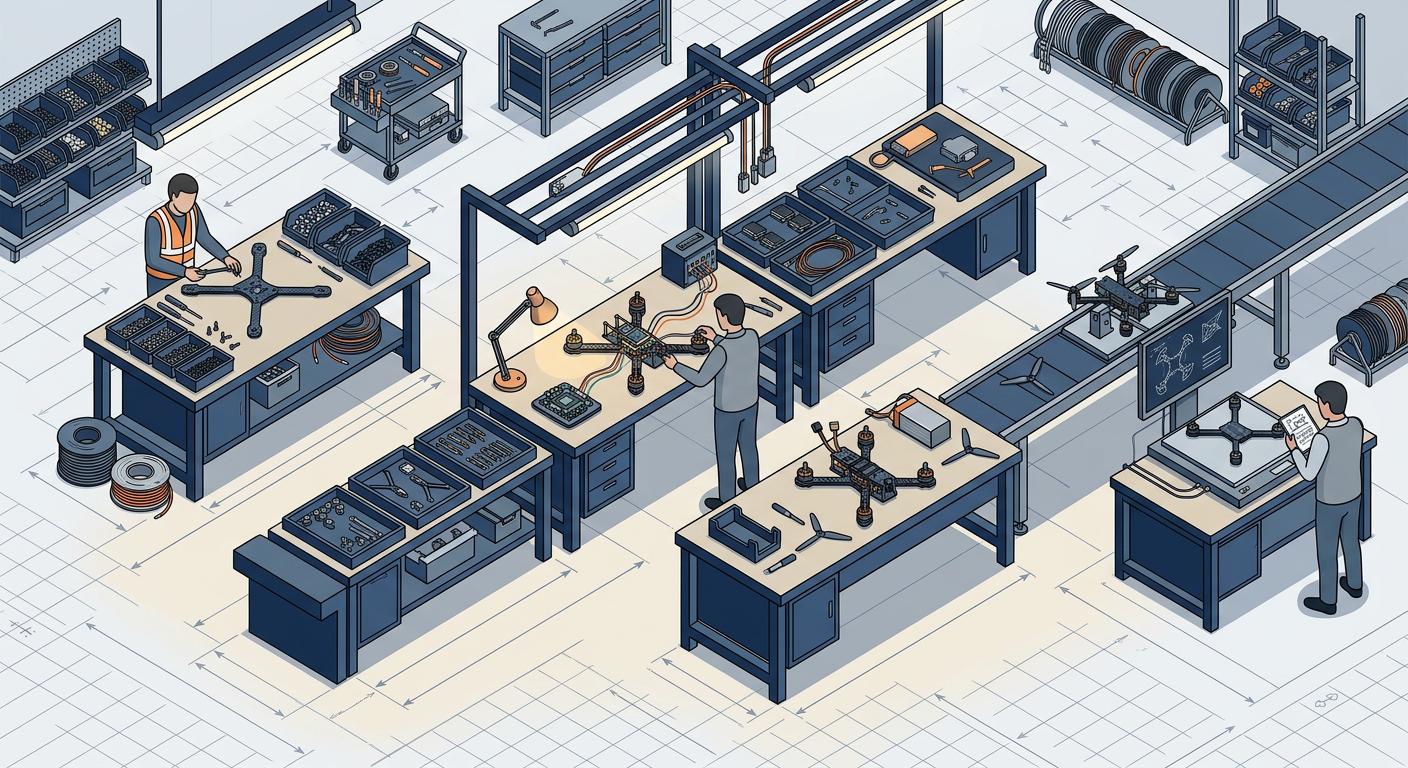

The structure Ukraine has adopted to pursue this goal is itself noteworthy. Rather than funneling development through a single state defense bureau, the country has encouraged a broad ecosystem of private firms — startups, software houses, established defense contractors — to compete and collaborate simultaneously. This mirrors, in wartime compression, the kind of innovation ecosystem that peacetime governments spend years trying to cultivate through grants and incubators.

The model carries distinct advantages. Multiple teams working on parallel approaches accelerate the cycle of iteration. Software updates can be pushed to fielded drones in days rather than months, a tempo more familiar to consumer technology than to traditional defense procurement. Hardware modifications — new sensor packages, lighter airframes, alternative guidance modules — can be tested in live conditions almost immediately. The feedback loop between the front line and the workshop is measured in weeks, not budget cycles.

It also carries risks. Quality control across hundreds of suppliers is difficult. Interoperability between systems from different manufacturers is not guaranteed. And the legal and ethical frameworks governing autonomous targeting — specifically, the degree of human oversight required before a strike — remain contested internationally, even as the technology races ahead of the debate.

Precedent and Implication

Global defense establishments are paying close attention, and not only to the hardware. The operational data emerging from Ukraine's autonomous drone deployments constitutes the largest real-world dataset on AI-assisted kinetic engagement ever assembled. For military planners in NATO capitals, in Beijing, and elsewhere, this information is invaluable: it reveals failure modes, effective countermeasures, and the practical limits of current computer-vision algorithms under battlefield conditions — dust, smoke, thermal distortion, camouflage.

The broader strategic implication extends beyond any single conflict. Ukraine's approach suggests a template for how smaller nations, or those with limited conventional forces, might offset asymmetries in manpower and materiel through rapid technological adaptation. The logic is not new — it echoes the way irregular forces have historically sought force multipliers — but the specific combination of commercial AI, cheap airframes, and decentralized production represents something qualitatively different.

What remains unresolved is where the line between autonomous capability and autonomous authority will settle. The technology to allow a drone to complete a strike without human confirmation already exists. Whether a drone should be permitted to do so (under what rules of engagement, with what accountability mechanisms, subject to what international norms) is a question that neither Ukraine's battlefield urgency nor the global arms-control architecture has yet answered. The tension between operational necessity and normative restraint is likely to define the next phase of this debate, shaped less by diplomatic conferences than by the engineering choices being made, under fire, right now.

With reporting from El Confidencial.
