For years, Meta has maintained that its automated systems and human moderators constitute a robust defense against digital fraud. A new class-action lawsuit filed by the Consumer Federation of America (CFA) in Washington, D.C., now challenges that narrative directly. The filing alleges that the company has systematically misled users about the prevalence of scam advertisements on Facebook and Instagram, prioritizing advertising revenue over consumer protection. If the claims hold up in court, the case could set a significant precedent for how social media platforms are held accountable for the commercial content they distribute.

The lawsuit highlights a recurring pattern of deceptive advertisements — ranging from promises of "free government iPhones" to fraudulent stimulus checks targeted at specific age groups. Many of these campaigns now leverage AI-generated video to lend a veneer of authenticity to their claims. According to the CFA, these are not isolated oversights but the predictable output of a platform architecture that treats every ad impression as revenue first and a potential consumer harm second.

The structural tension between ads and safety

The core allegation in the CFA's complaint is not simply that scam ads exist on Meta's platforms — no system operating at the scale of Facebook and Instagram can catch every bad actor in real time. The more pointed claim is that Meta has actively misrepresented the effectiveness of its safeguards while structuring its business in ways that make enforcement harder than it needs to be.

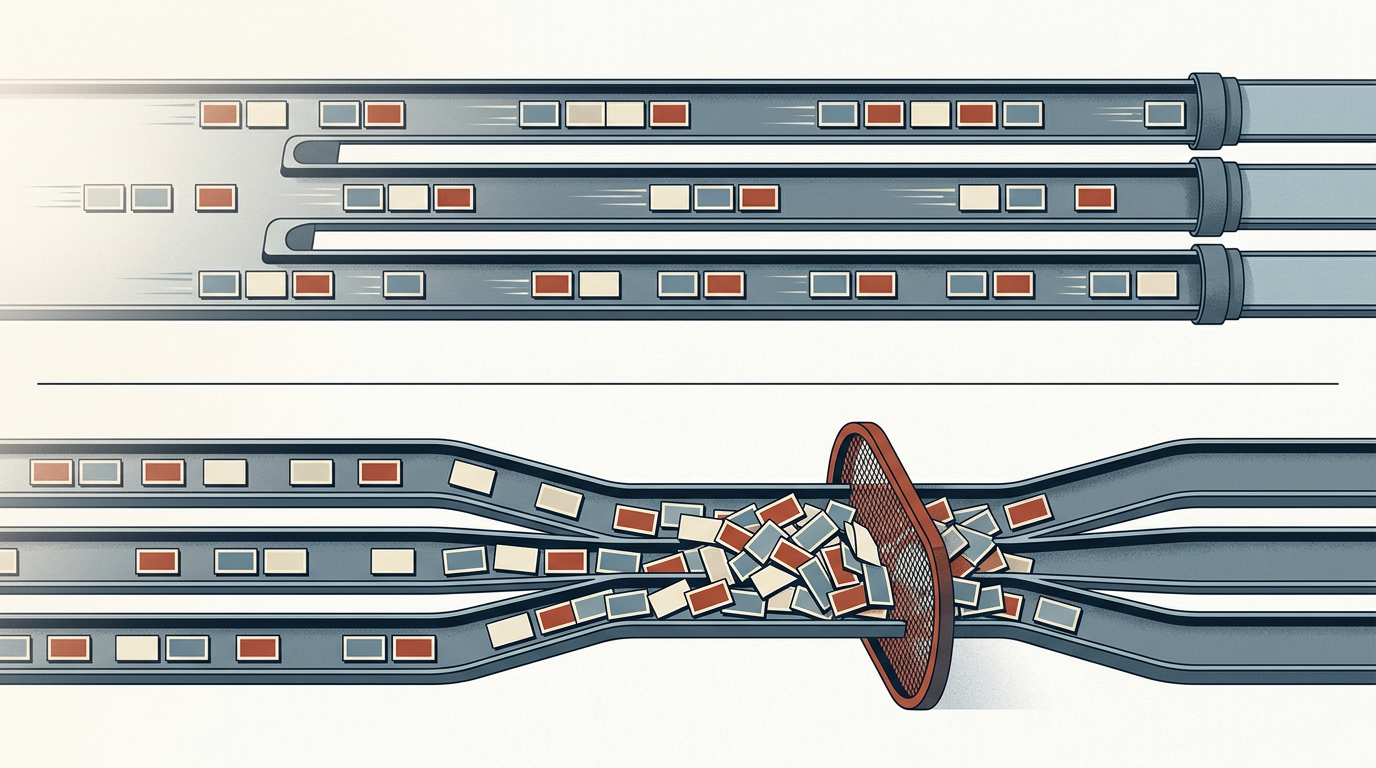

This tension is not new. The advertising-driven model that underpins Meta's business requires maximizing the volume and velocity of ad placements. Every additional layer of review introduces friction — slower ad approval, higher operational costs, and potentially fewer paying advertisers. For a company that derives the vast majority of its revenue from advertising, the incentive structure tilts toward permissiveness. The CFA's lawsuit frames this not as a bug but as a feature of Meta's commercial design.

The emergence of generative AI tools has sharpened the problem. Producing convincing fraudulent content — deepfake endorsements, synthetic voices, fabricated product demonstrations — is now cheaper and faster than at any point in the history of digital advertising. Platforms that rely on pattern recognition to flag suspicious content face an adversary that can iterate faster than detection models can adapt. The question the lawsuit implicitly raises is whether Meta has invested in countermeasures proportionate to the threat, or whether it has allowed the arms race to tip in favor of bad actors because doing so is less costly than the alternative.

A broader reckoning for platform liability

The CFA's legal challenge arrives at a moment when the regulatory and legal environment around platform accountability is shifting. In the United States, Section 230 of the Communications Decency Act has long shielded platforms from liability for user-generated content, but paid advertising arguably occupies a different position. When a platform accepts payment to distribute content, the argument that it is merely a neutral intermediary becomes harder to sustain. The CFA's case appears to rely on this distinction, focusing on the commercial relationship between Meta and the advertisers who pay to reach its users.

Internationally, the European Union's Digital Services Act already imposes more explicit obligations on very large platforms to assess and mitigate systemic risks, including those arising from advertising. Whether or not the CFA lawsuit succeeds on its specific claims, it reflects a growing consensus among regulators and consumer advocates that the self-regulatory model — in which platforms police their own ad ecosystems and report their own performance — has produced insufficient results.

Previous reporting has indicated that Meta generates significant revenue from advertisements promoting banned goods and fraudulent schemes, and that internal processes have at times hindered employees' ability to purge malicious actors. If those accounts are accurate, they suggest a gap between Meta's public commitments and its operational reality — precisely the kind of gap that class-action litigation is designed to test.

The outcome will depend on what discovery reveals about Meta's internal decision-making: how it allocates resources between ad review and ad growth, what its own data shows about scam prevalence, and whether executives were aware of shortcomings they chose not to disclose. The tension between a platform's commercial imperatives and its duty of care to users is not unique to Meta, but few companies operate at comparable scale with comparable exposure. How this case unfolds will signal whether the legal system treats that scale as an excuse or as an aggravating factor.

With reporting from Engadget.
