A year after integrating image generation directly into its flagship chatbot, OpenAI has released ChatGPT Images 2.0. The update introduces reasoning capabilities into the visual generation pipeline, allowing the model to cross-reference its outputs against web searches and apply spatial logic before rendering a final image. The company describes the release as a "step-change" in how generative models interpret complex instructions, handle dense text, and manage the spatial relationships between objects within a frame. The rollout is currently underway for all ChatGPT users.

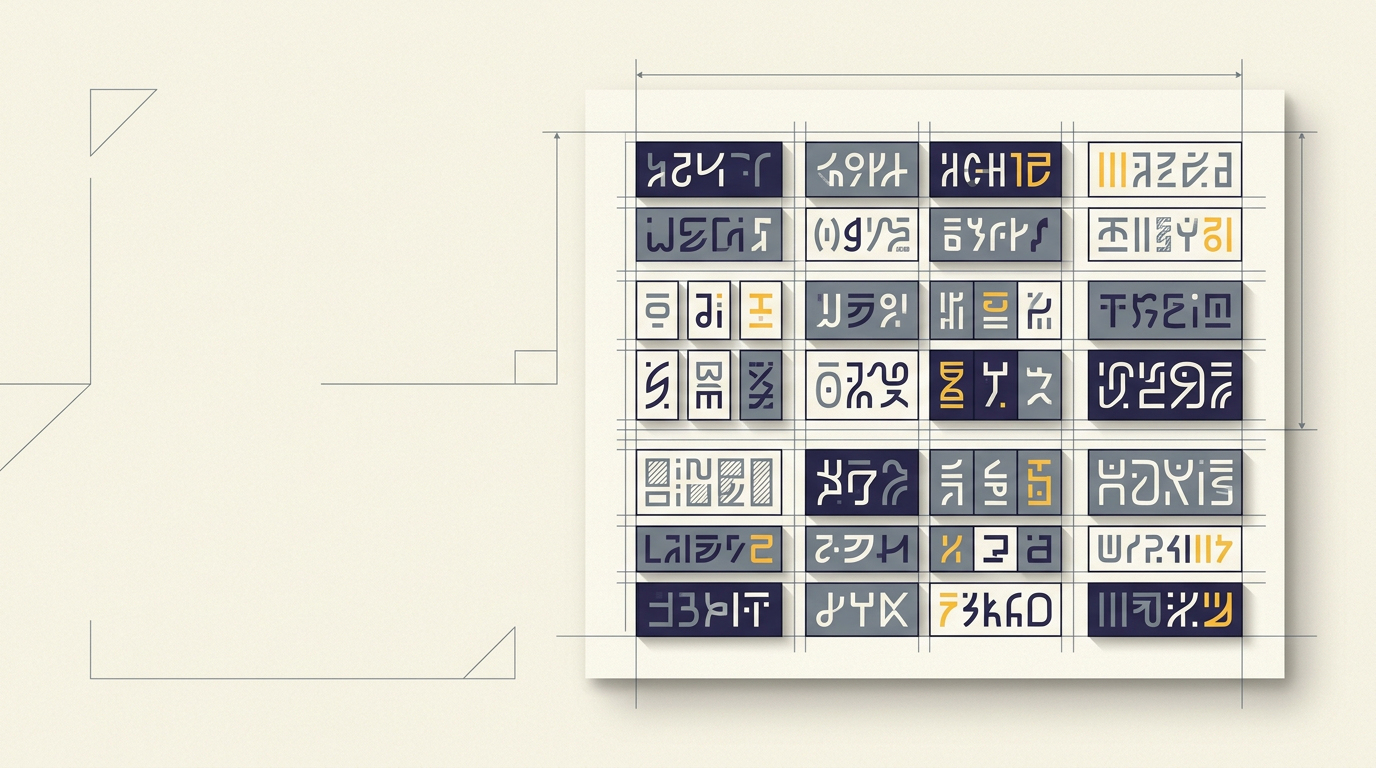

The update also targets a longstanding weakness of text-to-image systems: non-Latin script rendering. OpenAI reports substantial gains in Japanese, Korean, Chinese, Hindi, and Bengali typography, an area where previous models frequently produced garbled or incoherent characters. For a technology whose training data has historically skewed toward English-language and Western visual conventions, the improvement signals a deliberate effort to broaden the tool's addressable market.

Reasoning as a design principle

The most consequential element of the release is not any single feature but the architectural decision to embed reasoning into the image generation process itself. In earlier iterations of text-to-image tools — from OpenAI and competitors alike — the generation pipeline operated largely as a pattern-matching exercise: a prompt went in, a statistically plausible image came out, and the user either accepted the result or tried again. The feedback loop was external, dependent on human judgment.

ChatGPT Images 2.0 attempts to internalize part of that loop. By enabling the model to verify elements of its output against web-sourced information, OpenAI is applying a principle already familiar from its language models: chain-of-thought verification, where intermediate steps are checked before a final answer is produced. The extension of this logic to visual generation is nontrivial. Text outputs can be evaluated against factual databases with relative ease; images involve spatial coherence, typographic accuracy, and contextual appropriateness — dimensions that are harder to formalize as verification targets.
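The idea of moving the feedback loop inside the system can be illustrated with a toy generate-check-retry loop. The sketch below is purely illustrative and makes no claim about how OpenAI implements verification: `Draft`, `toy_generate`, `spelling_check`, and `generate_with_verification` are hypothetical names, and the "image" is reduced to the text it is supposed to display so that the check is trivial to write.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Draft:
    """Hypothetical stand-in for a candidate image: only the text it displays."""
    rendered_text: str
    corrections: List[str]


# Assumed signatures for illustration; these are not part of any real API.
Generator = Callable[[str, List[str]], Draft]
Check = Callable[[Draft], List[str]]  # returns problems found; empty means pass


def generate_with_verification(
    prompt: str,
    generate: Generator,
    checks: List[Check],
    max_rounds: int = 3,
) -> Draft:
    """Generate a draft, run every check, and regenerate with the problems as feedback."""
    corrections: List[str] = []
    draft = generate(prompt, corrections)
    for _ in range(max_rounds):
        problems = [p for check in checks for p in check(draft)]
        if not problems:
            break  # every check passed
        corrections = corrections + problems
        draft = generate(prompt, corrections)
    # If max_rounds is exhausted, the last draft is returned without a final check.
    return draft


def toy_generate(prompt: str, corrections: List[str]) -> Draft:
    """Toy generator: misspells the sign on the first try, fixes it once given feedback."""
    text = "OPNE" if not corrections else "OPEN"
    return Draft(rendered_text=text, corrections=list(corrections))


def spelling_check(expected: str) -> Check:
    """Build a check that compares the rendered text with the expected string."""
    def check(draft: Draft) -> List[str]:
        if draft.rendered_text != expected:
            return [f"text should read {expected!r}, got {draft.rendered_text!r}"]
        return []
    return check


result = generate_with_verification(
    "a shop sign that says OPEN", toy_generate, [spelling_check("OPEN")]
)
print(result.rendered_text, len(result.corrections))
```

In this toy setup the first draft fails the spelling check, the problem is fed back to the generator, and the second draft passes. The hard part the article points to is writing the checks themselves: a string comparison is easy, whereas spatial coherence or contextual appropriateness has no equally simple test.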

The practical implications are most visible in professional workflows. Storyboarding, game prototyping, and brand asset creation all require consistency across multiple outputs — the same character rendered from different angles, the same typeface maintained across frames. Creative drift, where successive generations subtly diverge from the original intent, has been a persistent friction point. A model capable of self-correction reduces the number of iterations a designer must run, compressing the gap between prompt and usable output.

The multilingual frontier

The emphasis on non-Latin scripts addresses a criticism that has followed generative AI since its consumer debut. Early diffusion models treated text within images as decorative texture rather than semantic content, producing convincing Latin characters but failing visibly with logographic and abugida writing systems. The problem was partly one of training data composition and partly one of architectural priority: rendering Chinese hanzi or Devanagari with precision demands a different kind of spatial awareness than rendering the Latin alphabet.

OpenAI's reported improvements in Japanese, Korean, Chinese, Hindi, and Bengali suggest that the company is investing in script-specific evaluation benchmarks — a necessary step if generative image tools are to function as genuine creative instruments outside the Anglophone world. The commercial logic is straightforward: markets in East Asia and South Asia represent hundreds of millions of potential users whose adoption depends on the tool producing culturally and linguistically accurate outputs.

The broader trajectory is worth watching. As reasoning capabilities migrate from text to image generation, the boundary between language models and creative tools continues to blur. The question is whether verification-augmented generation can scale to video, 3D rendering, and interactive media — domains where spatial and temporal coherence requirements are orders of magnitude more demanding. OpenAI's competitors, from Google DeepMind to emerging open-source projects, face the same technical frontier. Whether reasoning-first architectures become the industry standard or remain one approach among many will depend on how well they perform under the pressure of real creative workloads — a test that user adoption over the coming months will begin to answer.

With reporting from Olhar Digital.
