OpenAI has unveiled ChatGPT Images 2.0, a significant update to its image generation engine that signals a deliberate shift from creative experimentation toward professional utility. Where previous iterations of the tool often excelled at the surreal or the loosely illustrative, the new model is engineered for practical applications in marketing, presentations, and graphic design. The stated priority is precision — producing visual assets that require minimal post-production editing before they can be deployed in real workflows.

The update arrives at a moment when generative image tools are no longer curiosities. They are becoming embedded in the daily operations of design teams, content studios, and solo practitioners who lack the budget for dedicated illustrators. OpenAI's repositioning of its image engine reflects a broader industry consensus: the market for AI-generated images is migrating from consumer amusement to enterprise infrastructure.

Text Rendering and the Precision Gap

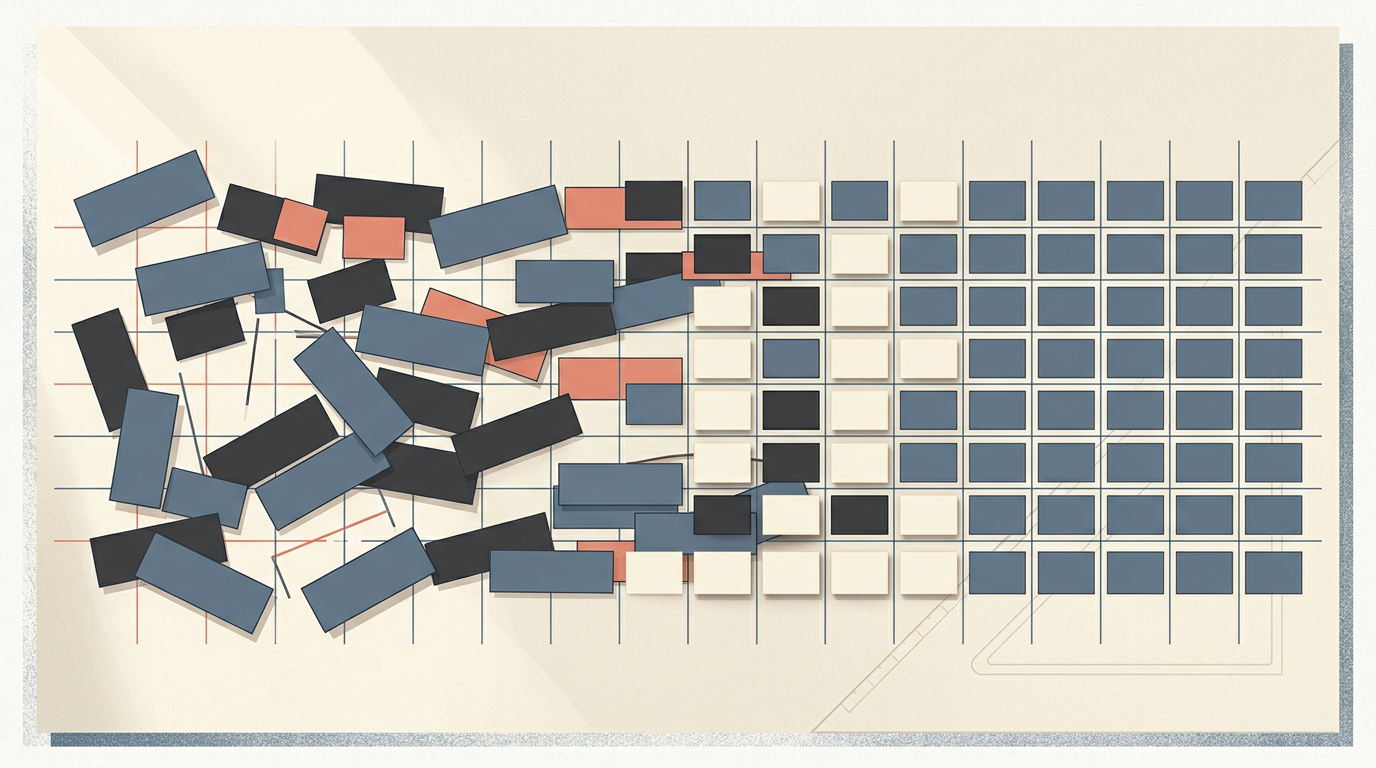

The most notable technical advancement in Images 2.0 lies in text rendering — a long-standing and stubborn hurdle for diffusion-based generative models. Since the early days of DALL·E and its competitors, producing legible, correctly spelled text within an image has been one of the clearest failure modes of the technology. Letters would warp, words would scramble, and any design requiring typographic accuracy was effectively off-limits.

By improving how characters, fonts, and spatial layouts are processed, OpenAI aims to close what might be called the precision gap: the distance between a generated draft and a usable final asset. If the gap is narrow enough, the tool stops being a brainstorming aid and starts functioning as a production engine — capable of outputting promotional banners, instructional diagrams, and social media cards that need little or no human correction. That transition, if it holds under real-world stress, changes the competitive calculus for design software incumbents and stock-image marketplaces alike.

While the update is accessible to all ChatGPT users, advanced "reasoning" capabilities — which allow the model to interpret more complex or multi-step prompts — remain exclusive to paid tiers, including Plus and Enterprise accounts. The tiered access model is consistent with OpenAI's broader monetization strategy: wide free distribution to build habit, premium features to capture revenue from power users and organizations.

Scale, Safety, and the Professional Stakes

The scale of adoption underscores why the professional pivot matters. OpenAI reports that users are generating over one billion images per week across its platform. At that volume, even marginal improvements in output quality translate into enormous shifts in how visual content is produced globally. A tool used at that frequency is no longer supplementary; it is part of the supply chain.

With greater capability, however, comes heightened scrutiny. During a press briefing, product lead Adele Li emphasized that the increase in fidelity does not come at the expense of safety protocols. The company maintains that its content guidelines are being expanded alongside the model's power, aiming to mitigate potential misuse — from deepfake-adjacent imagery to unauthorized brand replication. The tension between utility and guardrails is not new to generative AI, but it sharpens considerably when the output is polished enough to pass as professionally produced material.

The competitive landscape adds further pressure. Midjourney, Adobe Firefly, and Google's Imagen family have each staked claims in the professional-grade segment, with Adobe in particular leveraging its existing foothold in creative software to offer generation tools trained on licensed content. OpenAI's advantage is distribution — ChatGPT's massive user base provides a funnel that standalone image tools struggle to match. Whether distribution alone can sustain a lead over rivals with deeper creative-industry relationships remains an open question.

What is clear is that the axis of competition in generative imagery has shifted. The race is no longer about producing the most visually striking output. It is about producing the most usable one — and about who captures the workflow that follows.

With reporting from Tecnoblog.
