OpenAI has introduced ChatGPT Images 2.0, an update to its visual synthesis engine that incorporates the "reasoning" capabilities already present in its recent language models. Unlike its predecessors, which relied solely on static training data, the new system can access the live web to inform its creative process — verifying real-world details or current events before translating a text prompt into pixels. The result, according to the company, is a higher degree of contextual accuracy: an image of a building can reflect its actual facade, a chart can reference recent data, and a depiction of a public figure can avoid the uncanny mismatches that have plagued earlier generators.

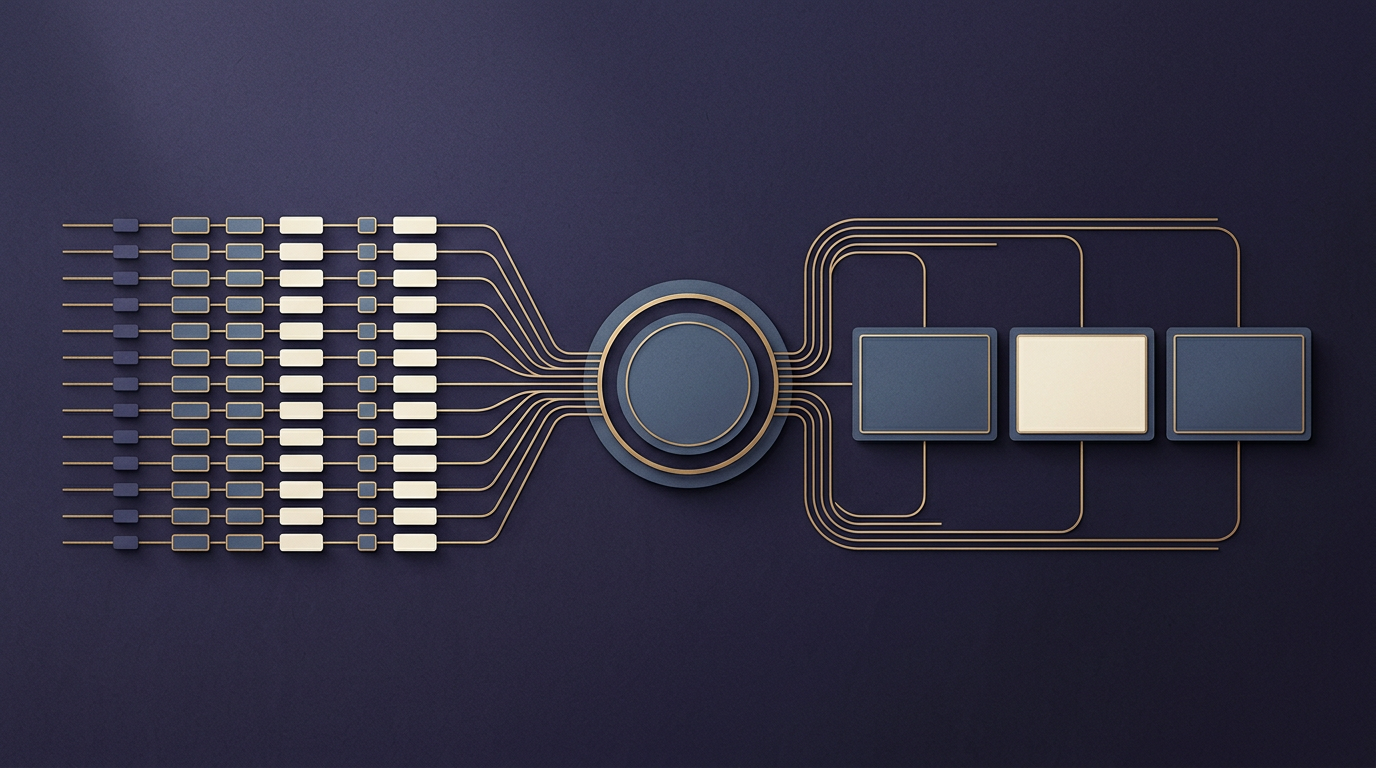

The update also introduces a more iterative approach to image generation. By applying "thinking" steps — a technique OpenAI first deployed in its o-series reasoning models — the system can produce a series of related images from a single prompt while maintaining visual consistency across the sequence. A third area of emphasis is what OpenAI calls "instruction following": the ability to adhere to complex, multi-part requests that often trip up less sophisticated models.

From one-shot generation to agentic workflows

The trajectory of AI image generation over the past several years has followed a recognizable arc. Early text-to-image systems, including OpenAI's own DALL·E and competitors such as Midjourney and Stability AI's Stable Diffusion, treated each prompt as a self-contained event: a user typed a sentence, the model returned an image, and any refinement required starting over or manually editing parameters. The results were often visually striking but unreliable in detail: misspelled text, incorrect object counts, anatomically implausible hands.

ChatGPT Images 2.0 represents a departure from that paradigm. By chaining reasoning steps before and during generation, the model mimics something closer to a human designer's workflow: research the subject, plan the composition, execute, then review. The addition of web search extends this logic further. Rather than relying exclusively on patterns learned during training — patterns that are frozen at a cutoff date — the system can pull in current information. For tasks that demand factual grounding, such as creating an infographic about a recent event or illustrating a product that launched last week, the difference is material.
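The research, plan, execute, review loop described above can be sketched in a few lines of Python. This is only an illustration of the general pattern, not OpenAI's implementation: the function `generate_with_review`, the `Result` type, and every stage function below are hypothetical stand-ins, and the "image" is just a string.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Result:
    image: str          # stand-in for image data; a real system would return pixels
    rounds: int         # how many render/review passes were used
    notes: List[str]    # facts gathered during the research step


def generate_with_review(
    prompt: str,
    research: Callable[[str], List[str]],
    plan: Callable[[str, List[str]], str],
    render: Callable[[str], str],
    review: Callable[[str, str], List[str]],
    max_rounds: int = 3,
) -> Result:
    """Research, plan, execute, then review, repeating until the review passes."""
    notes = research(prompt)                 # 1. research the subject
    layout = plan(prompt, notes)             # 2. plan the composition
    image = ""
    for round_no in range(1, max_rounds + 1):
        image = render(layout)               # 3. execute
        problems = review(prompt, image)     # 4. review against the request
        if not problems:
            return Result(image, round_no, notes)
        # Fold the reviewer's complaints back into the plan and try again.
        layout = layout + " | fix: " + "; ".join(problems)
    # Give up after max_rounds and return the last attempt.
    return Result(image, max_rounds, notes)


# Hypothetical stub stages, used only to show the control flow.
def fake_research(prompt: str) -> List[str]:
    return [f"fact about {prompt}"]


def fake_plan(prompt: str, notes: List[str]) -> str:
    return f"{prompt} using {len(notes)} fact(s)"


def fake_render(layout: str) -> str:
    return f"<image: {layout}>"


def fake_review(prompt: str, image: str) -> List[str]:
    # Pretend the first attempt has a text error and the corrected one passes.
    return [] if "fix:" in image else ["label text is misspelled"]


result = generate_with_review(
    "city skyline", fake_research, fake_plan, fake_render, fake_review
)
print(result.rounds, result.image)
```

In a real system the research step would be a web search and the review step would be a model call. The cap on rounds matters here because each extra pass adds latency and inference cost.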

This shift mirrors a broader trend across the AI industry. Several major labs have moved toward what researchers call "agentic" systems — models that do not merely respond to a prompt but take intermediate steps, use external tools, and verify their own outputs. Applying that architecture to image generation is a logical extension, though it raises its own set of questions about latency, cost, and the reliability of web-sourced information feeding into visual outputs.

The competitive and professional stakes

The update arrives at a moment when the market for AI-generated imagery is both expanding and fragmenting. Google, Meta, and a growing cohort of startups have each released or improved their own image models in recent months. Differentiation increasingly hinges not on raw visual quality — which has reached a baseline of competence across most major platforms — but on controllability, consistency, and integration into professional workflows.

OpenAI's emphasis on instruction following speaks directly to that competitive pressure. Designers, marketers, and product teams frequently need outputs that conform to precise specifications: exact color palettes, brand-consistent typography, accurate spatial relationships between objects. Models that cannot reliably execute such requests remain novelty tools rather than production instruments. By coupling reasoning with generation, OpenAI is making a bet that precision, not spectacle, will determine which platform captures professional spend.

There are tensions worth watching. Web search integration introduces a dependency on external sources whose accuracy the model cannot fully guarantee. Iterative generation increases computational cost at a time when inference economics are already a constraint for scaling AI services. And the line between a tool that "researches" a subject and one that reproduces copyrighted or sensitive material from the web remains legally and ethically unsettled.

What is clear is that the image generation market is entering a phase where the underlying technology matters less than the system architecture around it — the reasoning layers, the tool use, the feedback loops. Whether that architecture delivers on its promise of professional-grade reliability, or introduces new failure modes of its own, will shape the next chapter of the field.

With reporting from The Verge.
