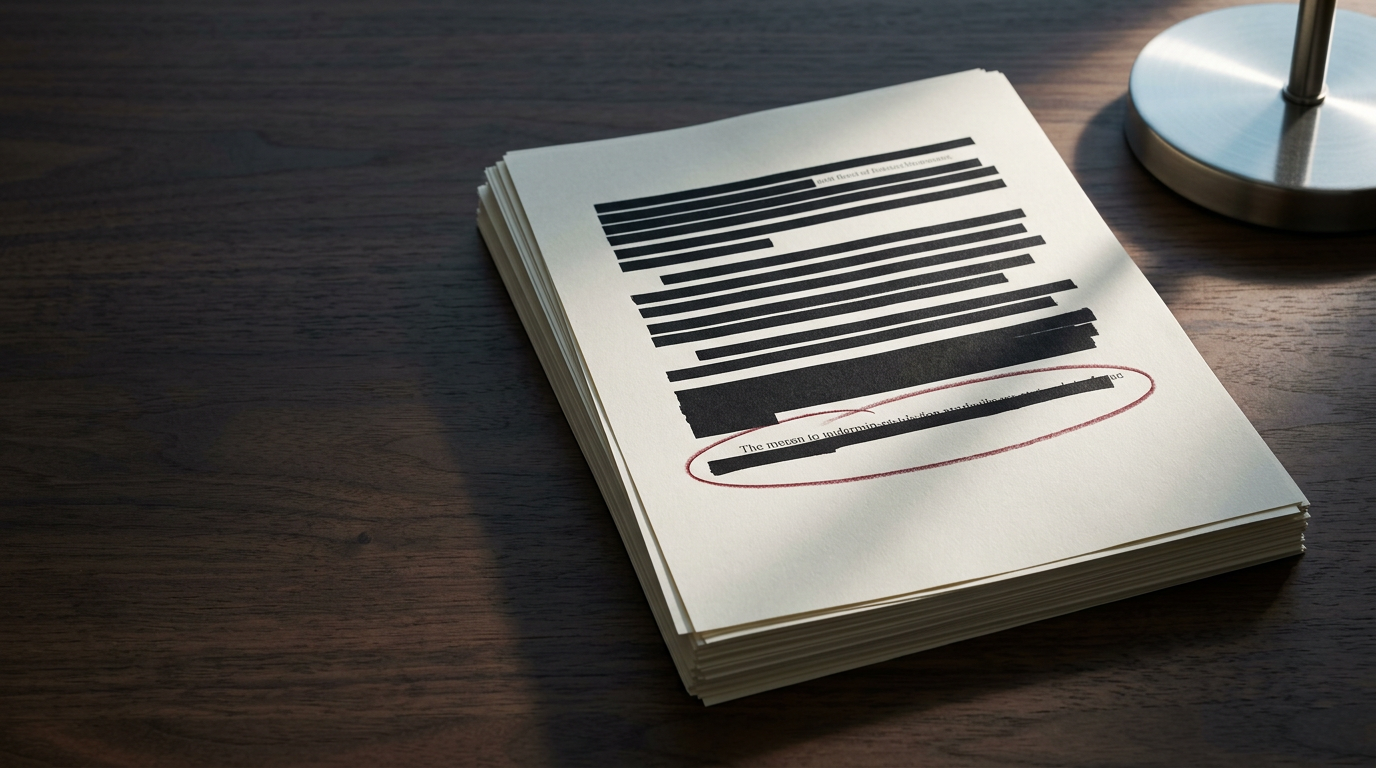

Sullivan & Cromwell, one of the most established law firms on Wall Street, has apologized to a U.S. Bankruptcy Court judge after a court motion it filed was found to contain inaccurate legal citations generated by artificial intelligence. The filing, submitted in the U.S. Bankruptcy Court for the Southern District of New York, included references that did not correspond to real case law — a phenomenon widely known in the AI field as "hallucination," in which a large language model produces plausible-sounding but fabricated outputs.

The incident is notable not because AI hallucinations in legal filings are new, but because of the firm involved. Sullivan & Cromwell sits at the top tier of American corporate law, regularly advising on the largest and most complex transactions and restructurings. That a firm of this caliber submitted a filing with fabricated citations underscores a structural problem that the legal profession has yet to resolve: the gap between the speed at which AI tools are being adopted and the rigor of the verification processes surrounding them.

A pattern the profession has struggled to break

The legal industry's reckoning with AI-generated hallucinations has been building for years. The most widely cited example came in 2023, when attorneys in a personal injury case before a federal court in New York submitted a brief containing fictitious case citations produced by ChatGPT. The presiding judge sanctioned the lawyers involved, and the episode became a cautionary tale across the profession. Since then, multiple courts have adopted standing orders requiring attorneys to disclose the use of AI in filings or to certify that all citations have been independently verified.

Despite these measures, the underlying risk has not diminished. Generative AI models are designed to produce fluent, contextually appropriate text, not to guarantee factual accuracy. Legal citation — which demands precision down to the volume number, page, and court — is precisely the kind of task where a model's tendency to fabricate coherent-looking but nonexistent references becomes most dangerous. The tools have improved, and legal-specific AI products have introduced citation-checking layers, but no system has eliminated the problem entirely.

The Sullivan & Cromwell episode suggests that even firms with extensive resources and sophisticated internal processes are not immune. Bankruptcy proceedings, which involve dense procedural histories and high financial stakes, place particular demands on accuracy. A fabricated citation in that context does not merely embarrass the filing party — it risks misleading the court on matters that affect creditors, debtors, and the integrity of the restructuring process.

The governance question firms cannot defer

The broader question raised by this incident is one of institutional governance. Law firms across the industry have moved to integrate AI into research, drafting, and document review workflows. The efficiency gains are real and, for competitive reasons, difficult to forgo. But the frameworks governing how these tools are used — who reviews AI-assisted output, at what stage, and with what level of scrutiny — remain uneven across the profession.

Some firms have implemented mandatory human review protocols for any AI-generated content before it reaches a court filing. Others have restricted the use of general-purpose models in favor of legal-specific platforms that cross-reference outputs against verified databases. The effectiveness of these measures varies, and the Sullivan & Cromwell case raises the question of whether even robust internal controls can fully account for the probabilistic nature of generative AI outputs.

Courts, meanwhile, face their own governance challenge. The patchwork of local rules and standing orders addressing AI use in litigation lacks uniformity. Whether federal courts move toward a standardized disclosure or certification requirement remains an open question — one that cases like this make harder to defer.

The tension is clear enough: the legal profession's economic incentive to adopt AI tools that accelerate workflow pulls against its foundational obligation to ensure the accuracy of every statement made to a court. How firms and courts manage that tension, whether through technology, process, or regulation, will shape the profession's relationship with generative AI for years to come. Sullivan & Cromwell's apology is a data point, not a resolution.

With reporting from Bloomberg — Technology.
