The capital requirements of the generative AI era are reaching a scale that blurs the line between venture investment and industrial infrastructure. Amazon has announced an additional $5 billion investment in Anthropic, the San Francisco-based developer of the Claude AI models, bringing its total commitment to the startup to $13 billion. The deal is more than a simple cash infusion; it is a strategic tethering that mandates Anthropic use Amazon's proprietary Trainium and Inferentia chips to train and deploy its future models.

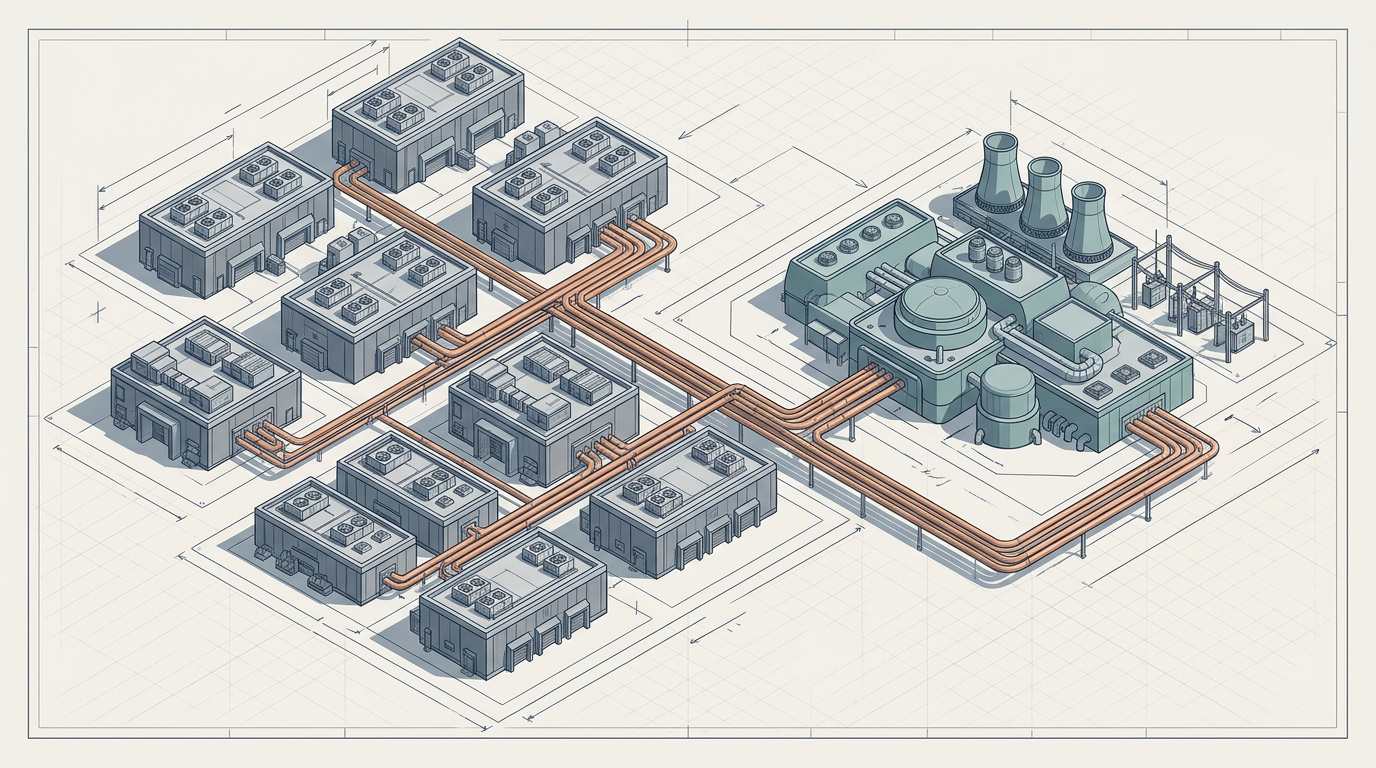

This deepening partnership arrives at a moment of operational friction for Anthropic. While Claude has garnered a reputation for nuanced reasoning and "constitutional" safety (a framework in which AI behavior is guided by a set of explicit principles rather than purely by human feedback), its success has outpaced its hardware capacity. Recent months have seen frequent performance lags and service outages as the startup has struggled to manage a surge in paid subscribers. By securing up to five gigawatts of compute capacity through Amazon's data centers, Anthropic is effectively buying the stability required to compete with OpenAI and Google.

The Silicon Leverage

For Amazon, the deal provides a high-profile validation of its custom silicon. Trainium, designed for model training, and Inferentia, optimized for running inference — the process of generating outputs from a trained model — represent Amazon Web Services' long-running effort to reduce the AI industry's near-total dependence on Nvidia's GPU ecosystem. By moving Anthropic away from reliance on industry-standard Nvidia chips, Amazon is positioning its own hardware as a viable alternative for the most demanding workloads in the world.

The strategic logic mirrors a pattern familiar in cloud computing. Hyperscale providers have historically sought to vertically integrate wherever possible, designing custom networking equipment, storage systems, and now accelerators. Google's Tensor Processing Units set the precedent over a decade ago, demonstrating that purpose-built silicon could compete with general-purpose GPUs for specific machine learning tasks. Amazon's gambit with Anthropic follows the same playbook but raises the stakes: rather than merely offering custom chips as an option on its cloud platform, it is making them a contractual requirement for one of the most prominent AI companies in the world.

The arrangement includes a provision for an additional $20 billion in future funding, contingent on commercial milestones — a figure that underscores the staggering cost of staying relevant in the high-stakes race for increasingly capable AI systems. If those milestones are met, Amazon's total financial exposure to Anthropic could eventually reach $33 billion, a sum that would dwarf most venture investments in history and place the relationship closer to the territory of joint ventures or captive subsidiaries.

Dependency as Strategy

The deal raises a structural question that extends beyond the two parties involved. As the cost of training frontier models continues to climb, the number of entities capable of financing such work narrows. Anthropic, despite its rapid growth, faces the same constraint that has pushed nearly every leading AI lab into the orbit of a hyperscaler. OpenAI's relationship with Microsoft follows a broadly similar pattern: capital in exchange for cloud exclusivity and platform integration.

What distinguishes the Amazon-Anthropic arrangement is the hardware mandate. Tying model development to a specific chip architecture introduces switching costs that deepen over time. Models optimized for Trainium's instruction set and memory architecture become progressively harder to port to alternative hardware without performance penalties. For Anthropic, this trade-off purchases immediate relief from infrastructure bottlenecks. For Amazon, it creates a form of lock-in that grows more durable with each training run.

The tension embedded in this arrangement is worth watching. Anthropic has built its public identity around safety research and independence of judgment — qualities that sit uneasily alongside deep financial and operational dependence on a single corporate patron. Whether that independence can survive the gravitational pull of $13 billion, with the possibility of $20 billion more, is not a question of principle alone. It is a question of architecture — both computational and organizational — and the answer will be shaped by decisions that are already being made in the design of the next generation of Claude models.

With reporting from Ars Technica.
