The Industrialization of Deception

Since ChatGPT's public debut in late 2022, large language models have been rapidly repurposed for illicit ends. What began as a curiosity for generating human-like prose has become foundational infrastructure for cybercriminals. Using generative AI, bad actors have moved well beyond the clumsy, grammatically suspect phishing emails of the past. They have replaced them with sophisticated, targeted campaigns and hyperrealistic deepfakes designed to get past even the most skeptical human filters. The shift is structural: the economics of cybercrime have changed, and the consequences are only beginning to surface.

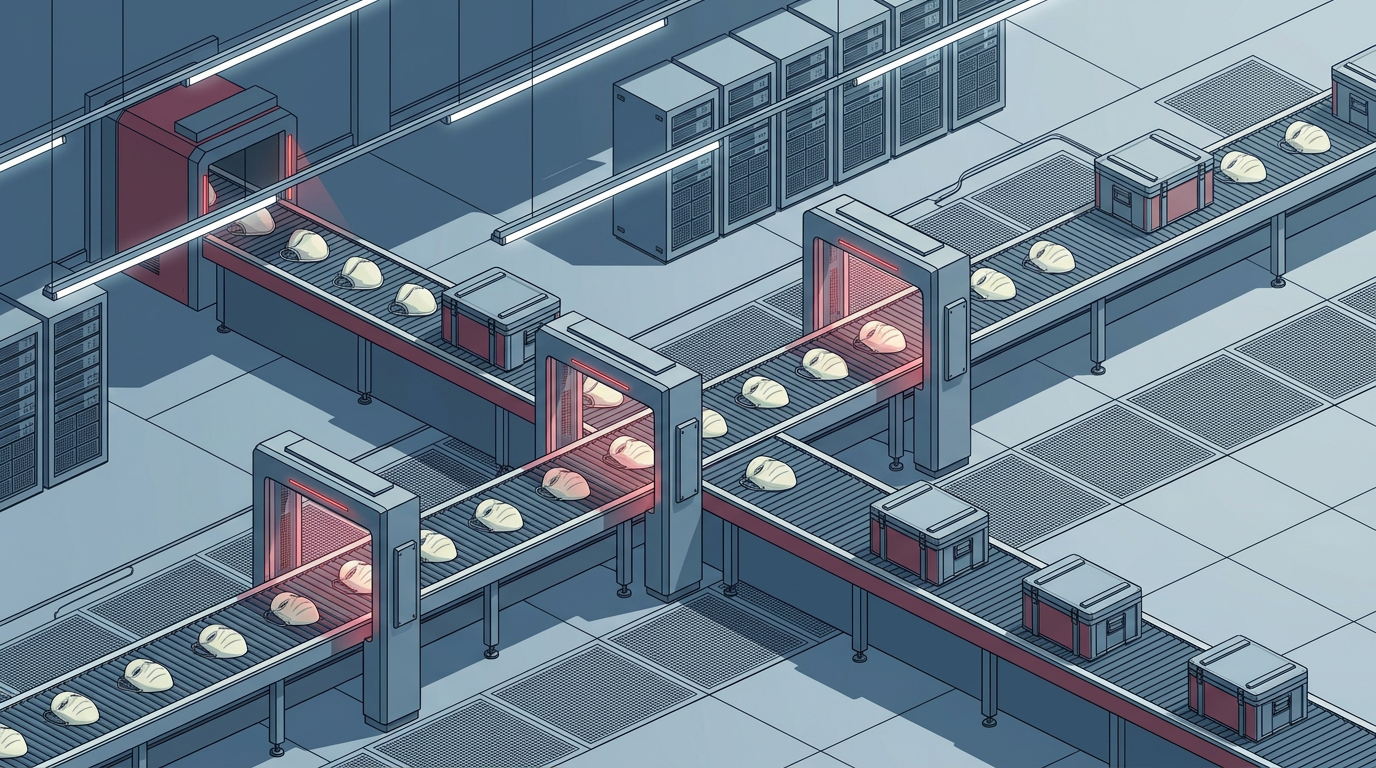

For years, the limiting factor in most cyberattacks was labor. Crafting a convincing spear-phishing email required language fluency, cultural knowledge, and patience. Building polymorphic malware demanded genuine programming skill. Social engineering at scale was expensive. Generative AI has compressed or eliminated each of these bottlenecks, turning what were once artisanal operations into something closer to industrial production lines.

From craft to factory floor

The impact of AI on the digital underworld is less about a single breakthrough and more about the industrialization of the entire exploit lifecycle. Criminals are now using AI to obfuscate malware code, making it harder for traditional signature-based security software to detect. They are automating the tedious process of scanning networks for vulnerabilities, work that previously required hours of manual reconnaissance. Once inside a system, AI tools can rapidly sift through vast volumes of stolen data to identify the most lucrative assets, turning a messy data breach into a streamlined extraction process.

Deepfakes represent a particularly potent vector. Voice cloning and video synthesis have reached a fidelity threshold where they can convincingly impersonate executives, family members, or government officials in real time. The technique is not new in concept — social engineering has always relied on impersonation — but the cost of producing a convincing fake has collapsed. What once required a film studio's worth of equipment can now be accomplished with consumer hardware and open-source models. The result is a category of fraud that scales in ways traditional con artistry never could.

This pattern echoes a broader historical dynamic. Each wave of communication technology — the telephone, email, the early web — was rapidly adopted by criminal enterprises precisely because it lowered transaction costs for deception. Generative AI follows the same logic, but at a pace and scale that outstrips previous cycles.

The barrier collapses

This technological shift has significantly lowered the barrier to entry for aspiring attackers. Interpol has raised alarms regarding scam centers in Southeast Asia that use inexpensive AI tools to manage high volumes of victims and pivot their operations with unprecedented speed. The implication is that cybercrime is no longer the exclusive domain of technically sophisticated groups. A relatively unskilled operator, armed with the right AI toolkit, can now mount campaigns that would have required a dedicated team just a few years ago.

Defenders face an asymmetry problem. Security vendors are building AI into their own detection systems, but the underlying imbalance persists: defenders must protect every possible entry point, while attackers need only find one. Automated vulnerability scanning tilts that balance further. The attacker's reconnaissance loop shrinks from hours of manual work to a fast, automated pass, compressing the window in which a patch or mitigation can be deployed. Regulatory frameworks, meanwhile, remain largely oriented around the previous era of threats, focused on data breach notification and compliance checklists rather than the adaptive, AI-augmented campaigns now emerging.

As these tools become cheaper and more accessible, the threat landscape is shifting from manual, targeted attacks to a persistent, automated siege. The question facing the cybersecurity industry, regulators, and the organizations caught in between is whether defensive capabilities can scale at the same rate — or whether the economics of AI-powered offense have tilted the field in a direction that traditional security architectures were never designed to handle.

With reporting from MIT Technology Review.