The impulse to protect children from the darker corners of the internet has increasingly manifested as a series of legislative walls. From Australia to the United Kingdom, and more recently in Greece, policymakers are racing to implement age-based restrictions on social media. The Council of Europe, however, is now urging a more measured approach. In a set of guidelines adopted on April 8, the intergovernmental body argues that blanket bans may inadvertently compromise the fundamental digital rights of minors while failing to actually secure their safety.

The Council's position centers on a tension that has defined digital policy debates for over a decade: how to balance child protection with the preservation of rights that democratic societies consider non-negotiable. Total exclusion from digital platforms, the guidelines warn, risks infringing upon a child's freedom of expression and their access to essential information. The concern is not merely philosophical but systemic — and it arrives at a moment when several national legislatures appear to be converging on prohibition as the default answer.

The migration problem

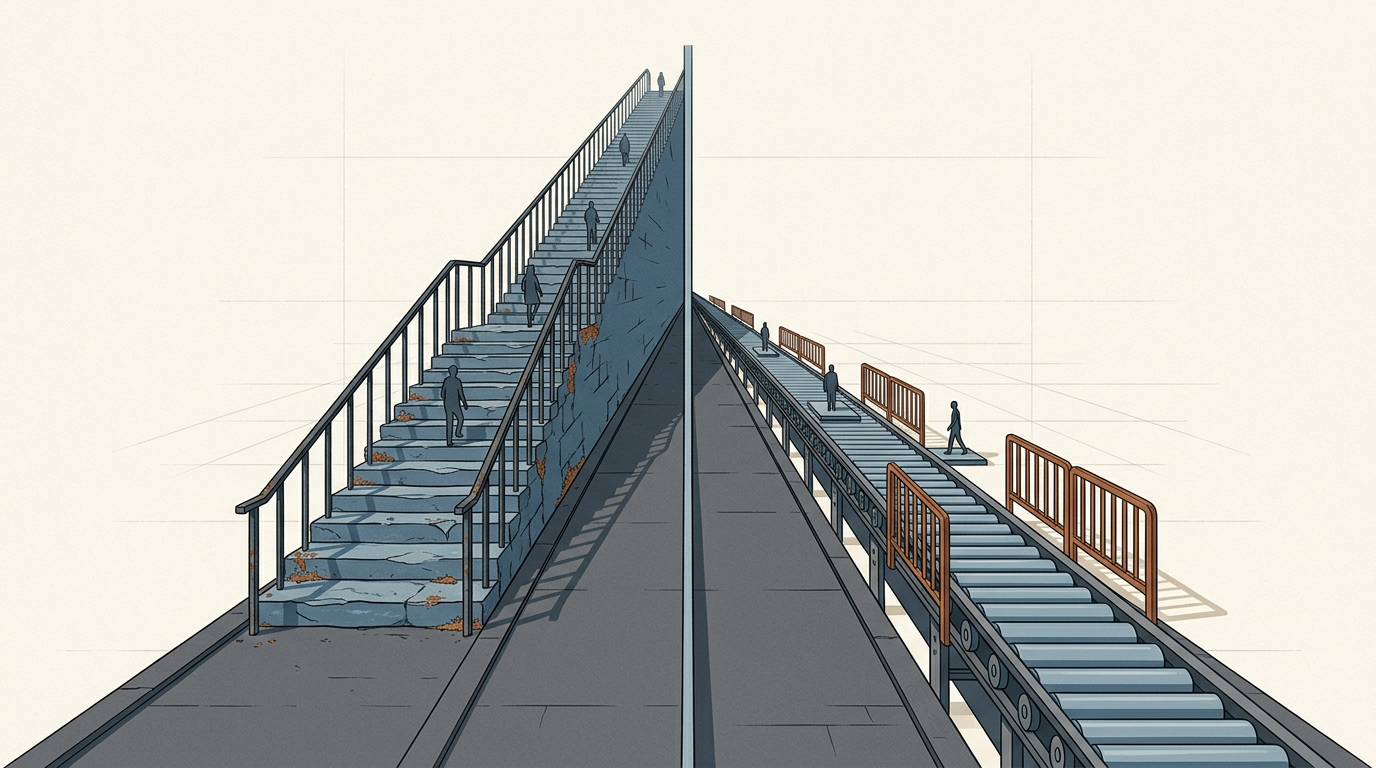

The most immediate practical objection to blanket bans is displacement. When mainstream platforms are barred to young users, the evidence from prior regulatory efforts in other domains suggests that demand does not disappear — it migrates. In the case of social media, that migration tends to push minors toward less-regulated environments: encrypted messaging groups, offshore platforms with minimal content moderation, or corners of the web where established safety tools and reporting mechanisms are absent. The paradox is stark. A policy designed to reduce exposure to harm may instead funnel vulnerable users toward spaces where exploitation is harder to detect and intervention is slower to arrive.

This dynamic is not unique to digital regulation. Prohibition-era alcohol policy in the United States, restrictions on certain online marketplaces, and even content-blocking regimes in authoritarian states have all demonstrated a recurring pattern: when access to a mainstream channel is severed without addressing underlying demand, shadow alternatives proliferate. The Council of Europe's guidelines implicitly invoke this logic, framing the question not as whether children should be protected, but whether exclusion is the mechanism most likely to achieve that protection.

The scientific community and advocacy organizations have raised parallel concerns. Groups such as Save the Children have pointed out that rushed policy decisions often ignore what some researchers describe as the "digital lifeline" that platforms provide. For marginalized youth — including those in rural areas, those navigating questions of identity, or those living in abusive households — the internet is frequently the only space where specialized support and community can be found. Removing access does not remove the need; it dismantles the safety net while leaving the underlying vulnerability intact.

Rights, design, and the regulatory middle ground

The Council of Europe's intervention reframes the debate in terms that are harder for legislators to dismiss. Rather than positioning child safety and digital access as opposing forces, the guidelines suggest they are interdependent. A child who cannot access age-appropriate information about mental health, civic participation, or personal safety is not necessarily safer — merely less informed.

This framing also shifts responsibility. If blanket bans are inadequate, the burden falls more heavily on platform design, algorithmic transparency, and enforcement of existing standards. The question becomes whether technology companies can be compelled to build environments that are safe by default for younger users, rather than relying on governments to wall off entire populations from digital participation. Several jurisdictions have begun exploring design-code approaches: regulatory frameworks that mandate safety features at the product level rather than restricting access at the user level. The United Kingdom's Age Appropriate Design Code, which the Information Commissioner's Office began enforcing in 2021, well before the current wave of age bans, represents one such experiment.

What remains unresolved is whether the political incentive structure favors nuance. Age-based bans are legislatively simple, publicly popular, and easy to communicate. Design-code regulation is technically complex, slower to implement, and harder to enforce across borders. The Council of Europe's guidelines may be analytically sound, but they ask lawmakers to choose the harder path at a moment when the easier one carries significant electoral appeal.

The tension, then, is not between those who care about children and those who do not. It is between two theories of protection — one rooted in exclusion, the other in supervised inclusion — and neither has yet produced definitive evidence of superiority. How that tension resolves will shape not only digital policy for minors but the broader relationship between democratic states and the platforms their citizens inhabit.

With reporting from Olhar Digital.