For years, the tech industry has offered a moral trade-off: the vast energy consumption and cultural disruption of large-scale artificial intelligence are the necessary price of a future of radical discovery. This rhetoric, which casts algorithmically generated content and chatbots as mere byproducts of a quest to cure cancer or solve climate change, is now being tested as the industry's leaders pivot toward "AI co-scientists." These systems are designed to move beyond clerical assistance, such as drafting papers or summarizing literature, toward autonomously generating hypotheses and designing experiments.

The prestige of this pursuit reached a high-water mark in 2024, when Google DeepMind's Demis Hassabis and John Jumper were awarded a share of the Nobel Prize in Chemistry for AlphaFold's ability to predict protein structures. That recognition transformed scientific AI from a niche academic interest into a competitive frontier. Google has since released dedicated co-scientist tools, while Anthropic has introduced features specifically tuned for biological research, signaling a shift from general-purpose models to specialized instruments for the laboratory.

From tool to agent

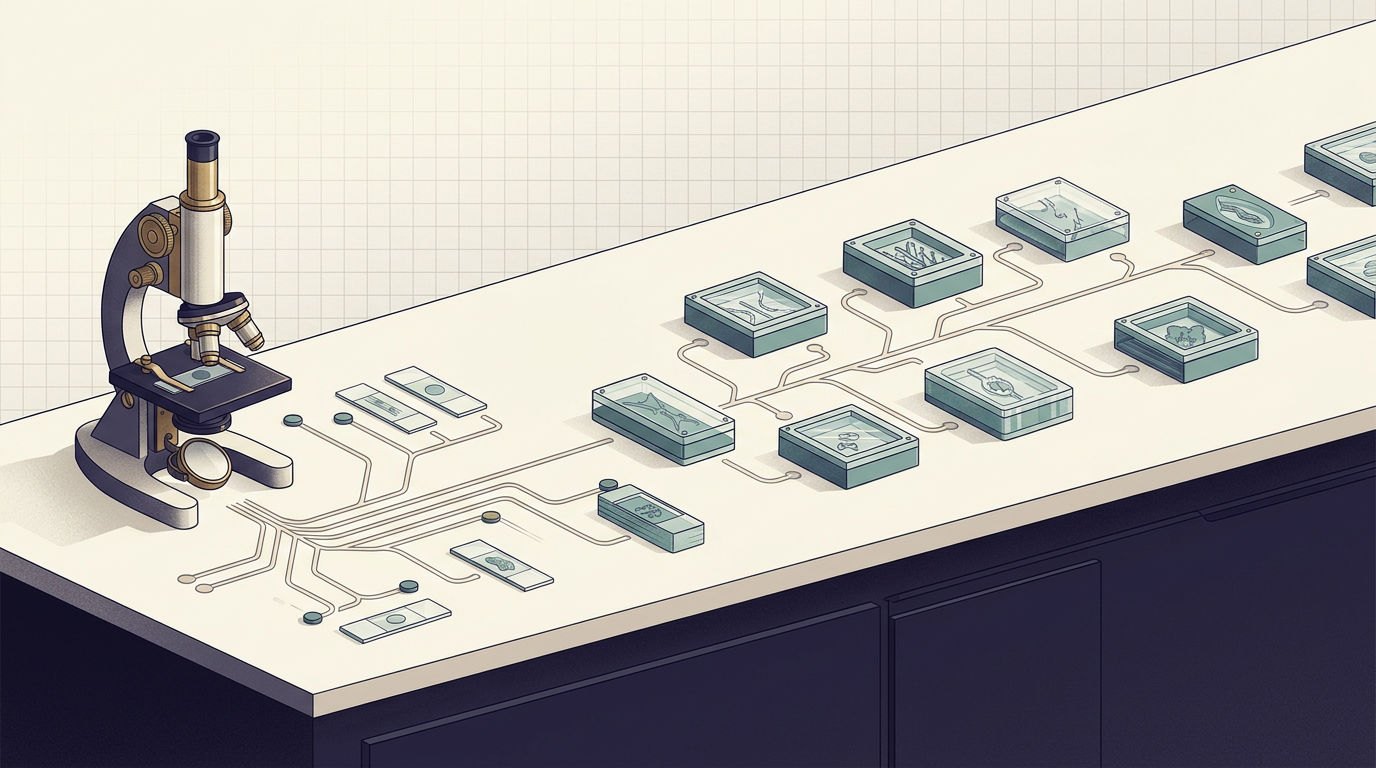

The distinction between an AI tool and an AI agent is not merely semantic. A tool responds to a prompt; an agent initiates, iterates, and adapts. The ambition behind the current wave of scientific AI is to cross that threshold — to build systems capable of identifying gaps in existing research, formulating testable hypotheses, and designing experiments to evaluate them, all with minimal human oversight.

OpenAI has identified the creation of an autonomous researcher as its "North Star," recently launching GPT-Rosalind as the first in a series of specialized scientific models. The naming itself is instructive: Rosalind Franklin's contributions to the discovery of DNA's structure were famously underrecognized during her lifetime, and the choice signals both reverence for scientific labor and a claim to its legacy. The ambition is to create a digital peer capable of navigating the complexities of the scientific method — not as a replacement for human researchers, but as an entity that can operate at a pace and scale no individual lab could match.

This trajectory has precedent beyond AlphaFold. DeepMind's earlier work on AlphaGo demonstrated that narrow AI systems could develop strategies that surprised even domain experts. The leap from mastering board games to mastering open-ended scientific inquiry, however, is qualitatively different. Games have fixed rules and clear win conditions. Science operates in a landscape where the rules themselves are often unknown, where negative results carry information, and where the framing of a question can matter as much as its answer.

The legitimacy question

For the major AI laboratories, the pivot toward scientific agents serves a dual purpose. On the technical side, scientific discovery offers a benchmark that is harder to dismiss than chatbot fluency or image generation. A model that contributes to a genuine breakthrough — a new material, a viable drug target, a climate intervention mechanism — produces value that is concrete and measurable in ways that consumer applications are not.

On the institutional side, the scientific framing addresses a growing credibility gap. The infrastructure required to train and run frontier models — the data centers, the energy consumption, the water usage for cooling — has drawn sustained scrutiny. Positioning these investments as the scaffolding for scientific progress reframes the cost structure as research expenditure rather than commercial overhead. Whether that framing holds depends on whether the outputs justify the inputs.

The history of automation in research offers a cautionary parallel. High-throughput screening in pharmaceutical development, introduced in the 1990s, promised to accelerate drug discovery by orders of magnitude. It delivered efficiency gains in certain stages of the pipeline, but did not fundamentally alter the rate at which new drugs reached patients. The bottleneck was never the speed of screening alone — it was the quality of the questions being asked and the biological complexity downstream. AI co-scientists face a similar test: speed and scale are necessary but insufficient conditions for discovery.

What remains unresolved is where human judgment becomes irreplaceable and where it becomes a bottleneck. The companies building these systems have strong incentives to emphasize the latter framing. The scientific community, which stands to gain from better tools but risks losing authorship and interpretive authority, has reason to insist on the former. The tension between those two positions — not the technology itself — may determine how autonomous science actually develops.

With reporting from MIT Technology Review.