In the world of semiconductors, heat is the ultimate tax: a byproduct of computation that must be managed, vented, or cooled at great expense. Data centers alone spend enormous amounts of energy on thermal management, and as artificial intelligence workloads grow, so does the thermal burden. A team at MIT's Institute for Soldier Nanotechnologies is now proposing a conceptual inversion: treating waste heat not as a nuisance but as a medium for computation itself. By using temperature gradients instead of electrical pulses, the researchers have demonstrated a form of analog computing that functions without a traditional power source.

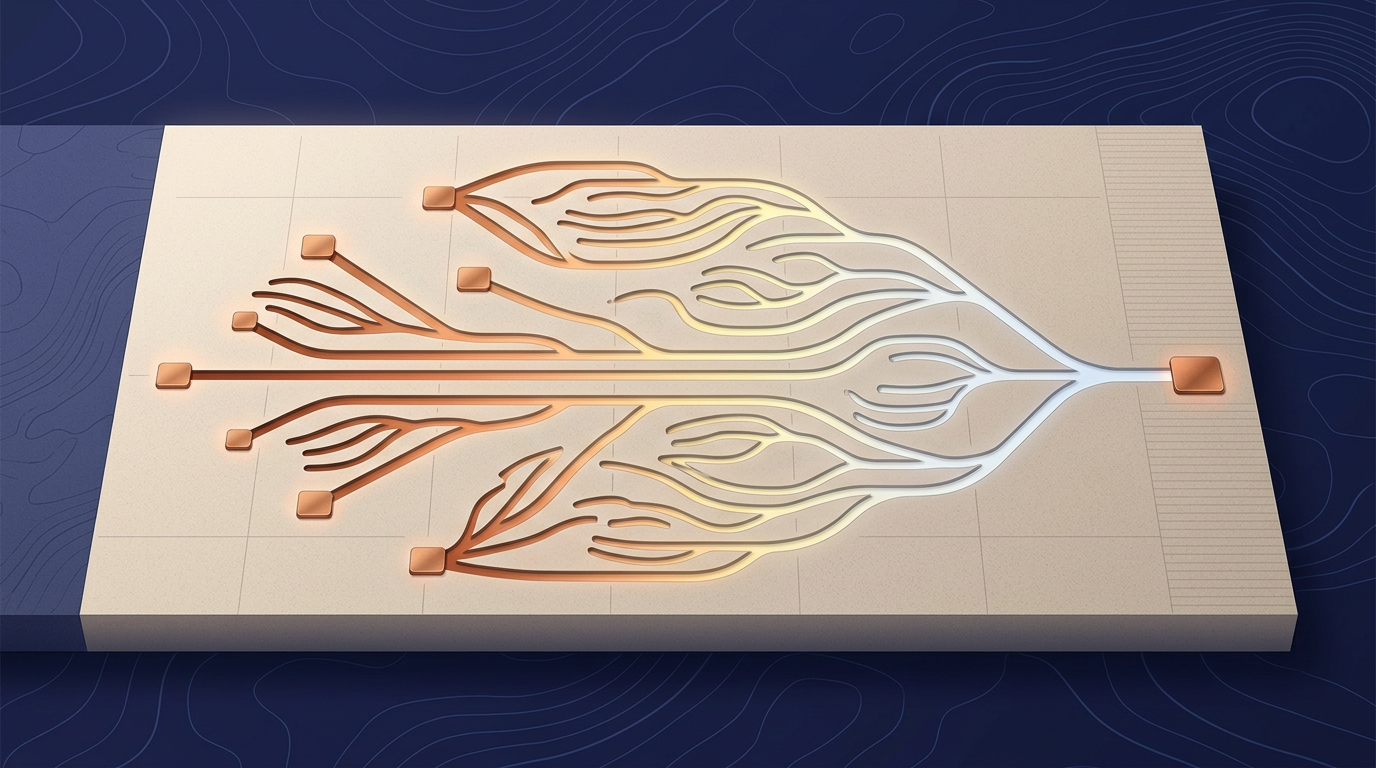

The system encodes data as a set of temperatures rather than binary ones and zeros. Using tiny silicon structures shaped by a physics-based optimization algorithm, the team directs the flow of heat to perform matrix-vector multiplication, the mathematical bedrock of modern machine learning and large language models. The output is read as the power collected at the terminal end. In initial tests, this thermal logic achieved accuracy exceeding 99%, suggesting that the physics of heat transfer can carry out this kind of arithmetic reliably, at least at small scale.
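To make the idea concrete, here is a minimal sketch of how conduction can act as a matrix-vector product. It assumes a toy lumped model that is not taken from the MIT work: each input port sits at some temperature above a reference, each output terminal is held at the reference, and a hypothetical conductance `G[i][j]` links input `j` to output `i`. The function name and all numbers are invented for illustration.

```python
# Toy lumped model, assumed for illustration only (not the MIT device).
# Input port j sits T[j] kelvin above a reference temperature; every output
# terminal is held at the reference. G[i][j] is a hypothetical thermal
# conductance (W/K) linking input port j to output terminal i.

def collected_power(G, T):
    """Heat power (W) arriving at each terminal: q[i] = sum_j G[i][j] * T[j]."""
    if any(len(row) != len(T) for row in G):
        raise ValueError("each row of G needs one conductance per input temperature")
    return [sum(g * t for g, t in zip(row, T)) for row in G]


G = [[0.5, 0.25],
     [0.125, 1.0]]   # W/K, made-up values
T = [2.0, 4.0]       # K above reference, made-up values

q = collected_power(G, T)
print(q)  # [2.0, 4.25]
```

In this picture the conductances play the role of the matrix, the temperatures are the input vector, and the collected power is the result. Physical conductances cannot be negative, so a model this simple only covers non-negative matrices; the source does not say how the MIT structures deal with signed values.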

Analog computing's quiet return

The MIT work sits within a broader resurgence of interest in analog computing. For decades, digital logic dominated because of its precision, scalability, and the relentless improvements described by Moore's Law. But as transistor scaling slows and energy costs climb — particularly for AI inference workloads — researchers have revisited analog approaches that trade perfect precision for radical gains in energy efficiency. Optical matrix multipliers, memristor crossbar arrays, and now thermal logic all share a common thesis: certain mathematical operations can be performed more cheaply by exploiting the physics of a substrate directly, rather than abstracting everything into voltage-gated switches.

What distinguishes the MIT approach is the substrate itself. Light and resistance have both been explored as analog computing media, but heat has historically been regarded as pure entropy — something to be dissipated, never harnessed. The conceptual leap here is that thermal gradients, which already exist as a waste product in every operating chip, carry latent computational potential. If that potential can be captured even partially, the energy arithmetic of computing changes. A portion of the power budget currently spent fighting heat could, in theory, be redirected into useful work.

The physics-based optimization algorithm used to shape the silicon structures is also notable. Rather than designing circuits by hand, the researchers let the mathematics of heat conduction dictate the geometry. This is a design methodology increasingly common in photonics and metamaterials — inverse design, where the desired output defines the structure rather than the other way around.
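The inverse-design loop can be sketched in a few lines: pick a target response, simulate the current structure, measure the mismatch, and nudge the geometry to shrink it. The sketch below is an assumption-laden stand-in, not the researchers' algorithm. The forward model `simulate` is a hypothetical lumped one in which each link's conductance is proportional to a bridge width, whereas real inverse design would solve the heat equation over a continuous geometry. Gradients are estimated by finite differences.

```python
# Hypothetical stand-in for a heat-conduction solver: each input-to-output
# link is a bridge whose conductance is proportional to its width.
C = 1.0  # conductance per unit width, normalized units (assumed)

def simulate(widths, temps):
    """Forward model: power at each output for given widths and input temperatures."""
    return [sum(C * w * t for w, t in zip(row, temps)) for row in widths]

def mismatch(widths, target, probes):
    """Squared error between simulated outputs and the target matrix's outputs."""
    total = 0.0
    for temps in probes:
        got = simulate(widths, temps)
        want = [sum(a * t for a, t in zip(row, temps)) for row in target]
        total += sum((g - w) ** 2 for g, w in zip(got, want))
    return total

def inverse_design(target, probes, steps=200, lr=0.1, eps=1e-6):
    """Gradient descent on the widths, using finite-difference gradients."""
    rows, cols = len(target), len(target[0])
    widths = [[0.5] * cols for _ in range(rows)]  # arbitrary starting geometry
    for _ in range(steps):
        base = mismatch(widths, target, probes)
        grads = [[0.0] * cols for _ in range(rows)]
        for i in range(rows):
            for j in range(cols):
                original = widths[i][j]
                widths[i][j] = original + eps
                grads[i][j] = (mismatch(widths, target, probes) - base) / eps
                widths[i][j] = original
        for i in range(rows):
            for j in range(cols):
                # Widths are physical dimensions, so they are clamped at zero.
                widths[i][j] = max(0.0, widths[i][j] - lr * grads[i][j])
    return widths


target = [[0.2, 0.7], [0.9, 0.4]]   # made-up matrix the structure should realize
probes = [[1.0, 0.0], [0.0, 1.0]]   # test inputs used to score each candidate
widths = inverse_design(target, probes)
```

With this toy forward model the answer is trivial, since the widths simply converge to the target entries. The point is the shape of the loop: the designer specifies the output and lets an optimizer find the structure.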

The gap between demonstration and deployment

Despite the breakthrough, the path to commercial integration remains steep. Scaling the technology to handle the millions of operations required by modern deep-learning models is a significant hurdle: as matrices grow larger, the thermal signals lose precision over distance. Heat diffuses, and diffusion is inherently lossy. Where electrical signals can be amplified and routed with minimal degradation across a chip, thermal signals attenuate quickly. This places a practical ceiling on the size and depth of computations the system can perform in its current form.
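A rough way to picture that ceiling, under an assumption the source does not make: treat a thermal signal path as a long conductor that leaks heat to its surroundings along its length. In steady state, the temperature rise along such a path falls off exponentially with distance (the classic long-fin result from heat transfer). The decay length and threshold below are hypothetical values chosen only to show the arithmetic.

```python
import math

def remaining_fraction(distance, decay_length):
    """Fraction of the input temperature rise left after `distance` (same units)."""
    return math.exp(-distance / decay_length)

def max_distance(min_fraction, decay_length):
    """Farthest point at which at least `min_fraction` of the signal survives."""
    return decay_length * math.log(1.0 / min_fraction)


decay_length = 2.0   # hypothetical, arbitrary length units
reach = max_distance(0.01, decay_length)   # distance at which 1% of the signal is left
print(round(reach, 2))  # 9.21
```

Because the loss compounds exponentially, doubling the path length squares the surviving fraction, which is why signal reach rather than raw device count may be the binding limit in a model like this.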

Yet the immediate utility may lie not in replacing GPUs but in "zero-power" sensing. By repurposing the ambient heat already present in electronics, these structures could detect thermal anomalies or monitor device health without drawing a single watt of additional electricity. Edge devices, wearable electronics, and military hardware (the last being the Institute for Soldier Nanotechnologies' core mandate) are environments where every milliwatt matters and waste heat is abundant. A sensor that feeds on its own thermal environment rather than a battery has obvious appeal in contexts where maintenance access is limited or power budgets are fixed.

The broader question is whether thermal logic can move beyond niche sensing into meaningful computation. Analog systems have historically struggled with the same problem: they work elegantly at small scale and degrade ungracefully as complexity rises. The 99% accuracy figure is encouraging for small matrices, but modern transformer models operate on parameter spaces many orders of magnitude larger. Whether inverse-designed thermal structures can maintain fidelity at that scale, or whether hybrid architectures combining thermal preprocessing with digital logic could capture some of the efficiency gains, remains an open engineering problem.

The tension is clear: the physics is sound, the energy proposition is attractive, and the substrate is literally free. The constraint is that heat, by its thermodynamic nature, resists the kind of precise control that computation demands. How far that constraint can be engineered around will determine whether thermal logic remains an elegant laboratory demonstration or becomes a practical layer in the computing stack.

With reporting from MIT Technology Review.
