The primary obstacle to universal quantum computing has long been the fragility of the qubit. Unlike classical bits, which settle into a definite one or zero, qubits can exist in a superposition of both states. This lets them encode combinations of values at once, but it also leaves them acutely vulnerable to the slightest environmental interference. Magnetic fields, thermal fluctuations, stray photons, even minor mechanical vibrations can disturb a qubit and destroy its superposition, a process known as decoherence. In practical terms, decoherence sets a timer on how long a quantum processor can perform useful work before its data dissolves into noise. Extending that timer is arguably the central engineering challenge of the field.

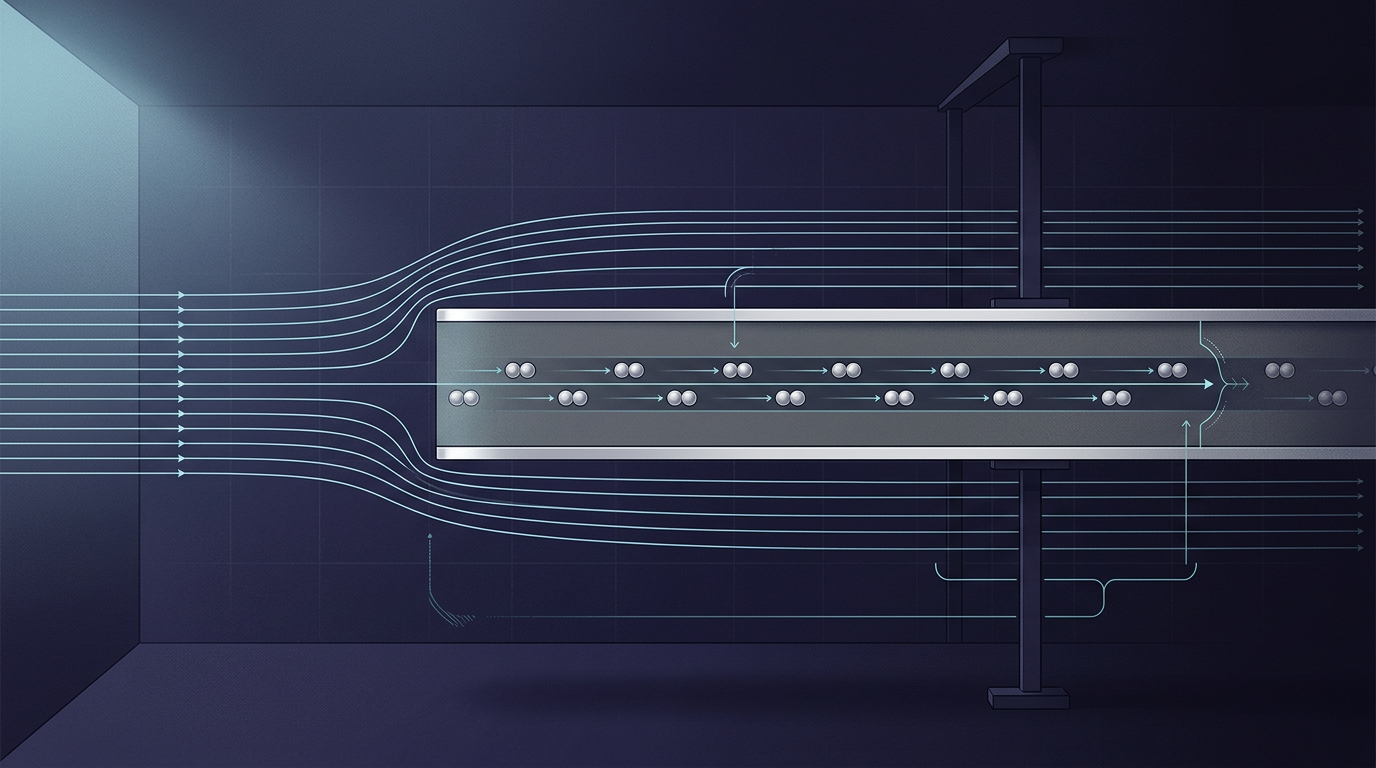

Researchers at Chalmers University of Technology in Sweden have introduced a potential solution: engineered structures they call "giant superatoms." These structures are designed to interact with light and matter on a significantly larger physical and electromagnetic scale than conventional atoms. Because they span more space, the superatoms act as a robust buffer, shielding the quantum system from the external disturbances that typically cause data loss. This shielding allows qubits to maintain their coherence for longer, a prerequisite for the complex, sustained calculations that a universal quantum computer would need to perform.

Why decoherence remains the bottleneck

The quantum computing industry has made substantial progress in recent years on qubit count, gate fidelity, and error-correction protocols. Yet decoherence continues to impose hard limits on what current hardware can accomplish. Most leading architectures (superconducting circuits, trapped ions, photonic systems) face some version of the same problem: the quantum states that encode information are extraordinarily sensitive to their surroundings. Error-correction schemes can compensate to a degree, but at enormous overhead, since many physical qubits are needed to sustain a single logical qubit. The ratio varies by platform, but the cost is steep enough that scaling to the large numbers of reliable logical qubits needed for practical applications remains a distant prospect under current approaches.

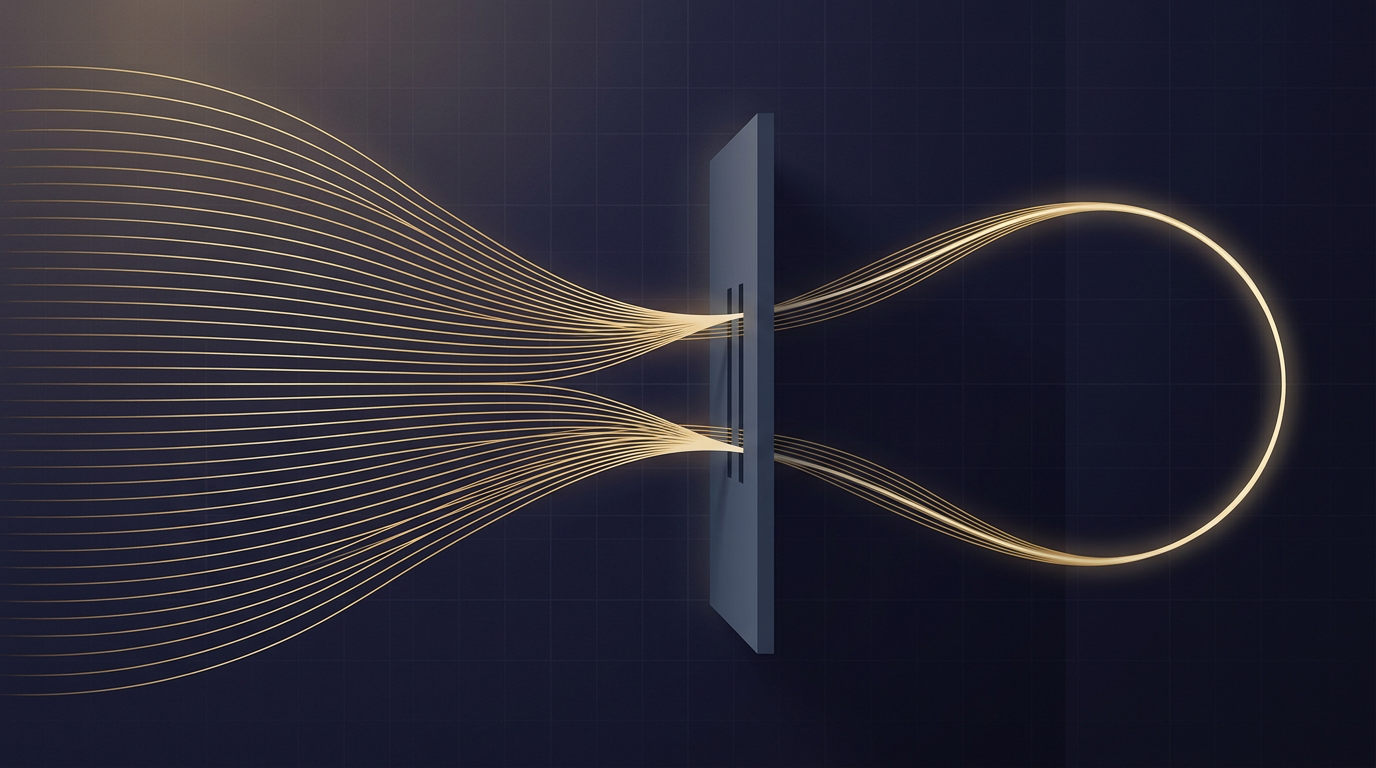

This is the context that gives the Chalmers research its significance. Rather than relying solely on software-level error correction after decoherence has already begun to erode the signal, the giant superatom approach attempts to suppress the problem at the hardware level. The concept draws on a well-established principle in atomic physics: the way an atom couples to the surrounding electromagnetic field can be shaped to amplify or dampen specific interactions. By extending that coupling over a much larger footprint, the Chalmers team has created structures that act as a kind of electromagnetic moat around the qubit, absorbing or deflecting environmental noise before it reaches the quantum state.

From laboratory curiosity to architectural question

A notable detail is that these superatoms can be integrated directly into quantum chips. Many proposals for improving qubit stability involve exotic materials, extreme isolation, or operation at temperatures a fraction of a degree above absolute zero. The Chalmers approach does not eliminate the need for cryogenic conditions, which superconducting qubits inherently require, but it adds a layer of protection that could relax some of the engineering tolerances currently demanded of quantum hardware. If the shielding proves effective at scale, it could reduce the number of physical qubits required per logical qubit, a leverage point with cascading implications for cost, complexity, and timeline.

The research is still at an early stage, and the distance between a laboratory demonstration and a production-ready component is considerable. Quantum computing has seen no shortage of promising results that struggled to survive the transition from controlled environments to integrated systems. The history of the field is littered with breakthroughs that solved one problem while introducing new constraints elsewhere.

Still, the Chalmers work represents an approach that differs in kind from the dominant strategy of compensating for noise through redundancy. It asks whether the environment itself can be managed at the physical layer, rather than corrected for at the logical layer. Whether hardware-level shielding and software-level error correction prove to be complementary — or whether one approach ultimately renders the other unnecessary — is a question the field has not yet resolved. The answer will shape not only the architecture of future quantum processors but also the economics of who can afford to build them.

With reporting from Olhar Digital.